KubeSphere Offline Deployment Guide (Illustrated)

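1. Deployment machine plan

| Role | Count | IP | CPU cores (per machine) | Memory | Disk |
| --- | --- | --- | --- | --- | --- |
| registry | 1 | 10.118.0.30 | 8 | 16 GB | 500 GB |
| master | 3 | 10.118.0.31 (master1) | 8 | 16 GB | 500 GB |
| node | 4 | 10.118.0.34 | 16 | 32 GB | 500 GB |
| mysql | 2 | 10.118.0.40 (mysql1), 10.118.0.41 (mysql2) | 24 | 48 GB | 2000 GB |
| nfs | 1 | 10.118.0.45 | 4 | 8 GB | 2000 GB |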

2. Prepare the image registry machine (IP: 10.118.0.30)

2.1 Install the required RPM packages

# Install the base RPM packages (vim, nfs)
yum localinstall -y offline_base_yum/*.rpm

2.2 Install Docker

Offline Docker installation (reference: https://blog.csdn.net/shenaohuoli132/article/details/137522227)

vim /etc/systemd/system/docker.service

[Unit]
Description=Docker Application Container Engine
Documentation=https://docs.docker.com
After=network-online.target firewalld.service
Wants=network-online.target

[Service]
Type=notify
ExecStart=/usr/bin/dockerd --selinux-enabled=false
ExecReload=/bin/kill -s HUP $MAINPID
LimitNOFILE=infinity
LimitNPROC=infinity
LimitCORE=infinity
TimeoutStartSec=0
Delegate=yes
KillMode=process
Restart=on-failure
StartLimitBurst=3
StartLimitInterval=60s

[Install]
WantedBy=multi-user.target

# Copy the static (portable) Docker package and its install.sh script to /opt/docker/, then install
mkdir -p /opt/docker/
cp docker-20.10.9.tgz install.sh /opt/docker/
cd /opt/docker/
./install.sh docker-20.10.9.tgz
# Reload unit files so systemd picks up docker.service
systemctl daemon-reload
systemctl enable docker
systemctl start docker
systemctl status docker

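Allow plain-HTTP (insecure) connections to the local registry:

vim /etc/docker/daemon.json

{
  "insecure-registries" : ["10.118.0.30:5000"]
}

Then restart Docker (systemctl restart docker) so the setting takes effect.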
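If you prefer to script this step, the following is a minimal sketch that writes the same `insecure-registries` entry and leaves the restart to you. It assumes /etc/docker/daemon.json has no other settings worth keeping, because the helper (the name `write_daemon_json` is hypothetical) overwrites the file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a daemon.json that marks one registry as insecure (HTTP).
# WARNING: overwrites the target file; merge by hand if daemon.json already has other keys.
write_daemon_json() {
  local registry="$1"                          # e.g. 10.118.0.30:5000
  local target="${2:-/etc/docker/daemon.json}"
  mkdir -p "$(dirname "$target")"
  cat > "$target" <<EOF
{
  "insecure-registries": ["${registry}"]
}
EOF
}

# Usage on the registry machine (as root):
#   write_daemon_json 10.118.0.30:5000
#   systemctl restart docker
#   docker info | grep -A1 'Insecure Registries'
```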

2.3 Install the offline docker-compose binary

chmod 755 docker-compose
cp docker-compose /usr/bin/

2.4 Start the image registry

# Point the registry-ui proxy at this machine's IP (10.118.0.30)
vim docker-compose.yml
# Set NGINX_PROXY_PASS_URL=http://10.118.0.30:5000

# Start the registry
docker-compose up -d

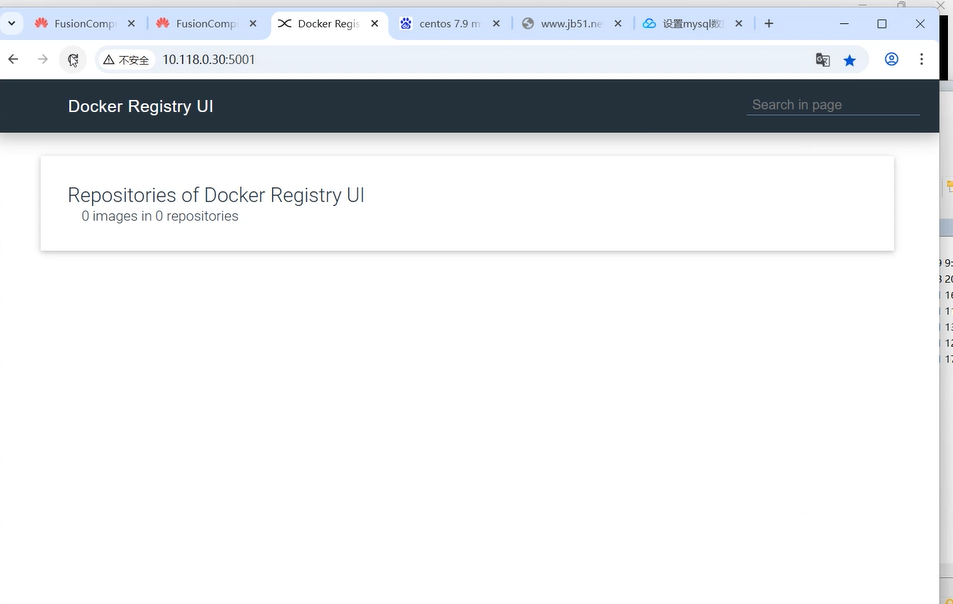

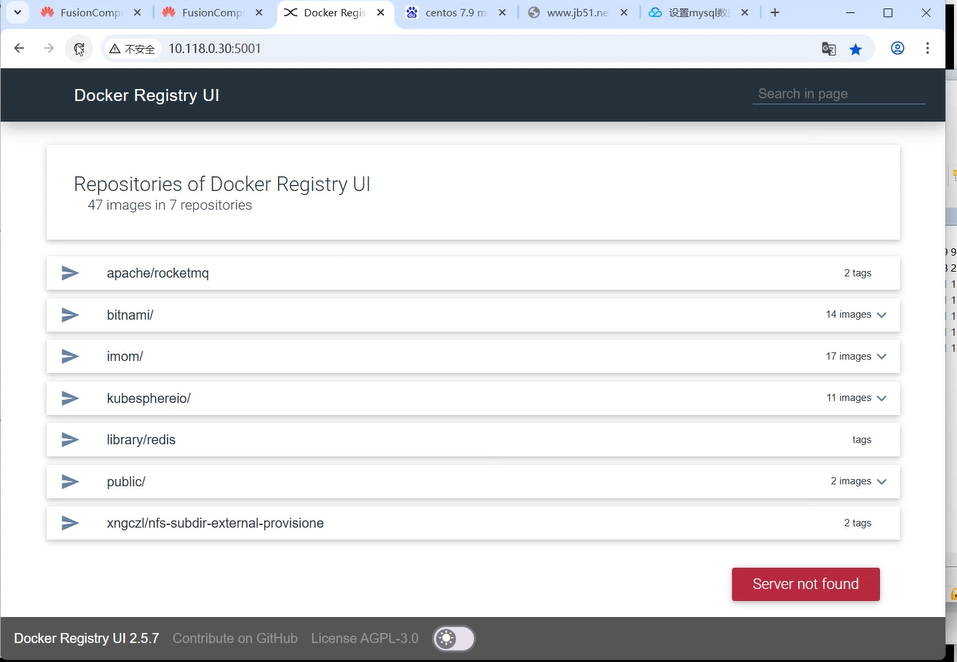

View the registry's repository list in a browser:

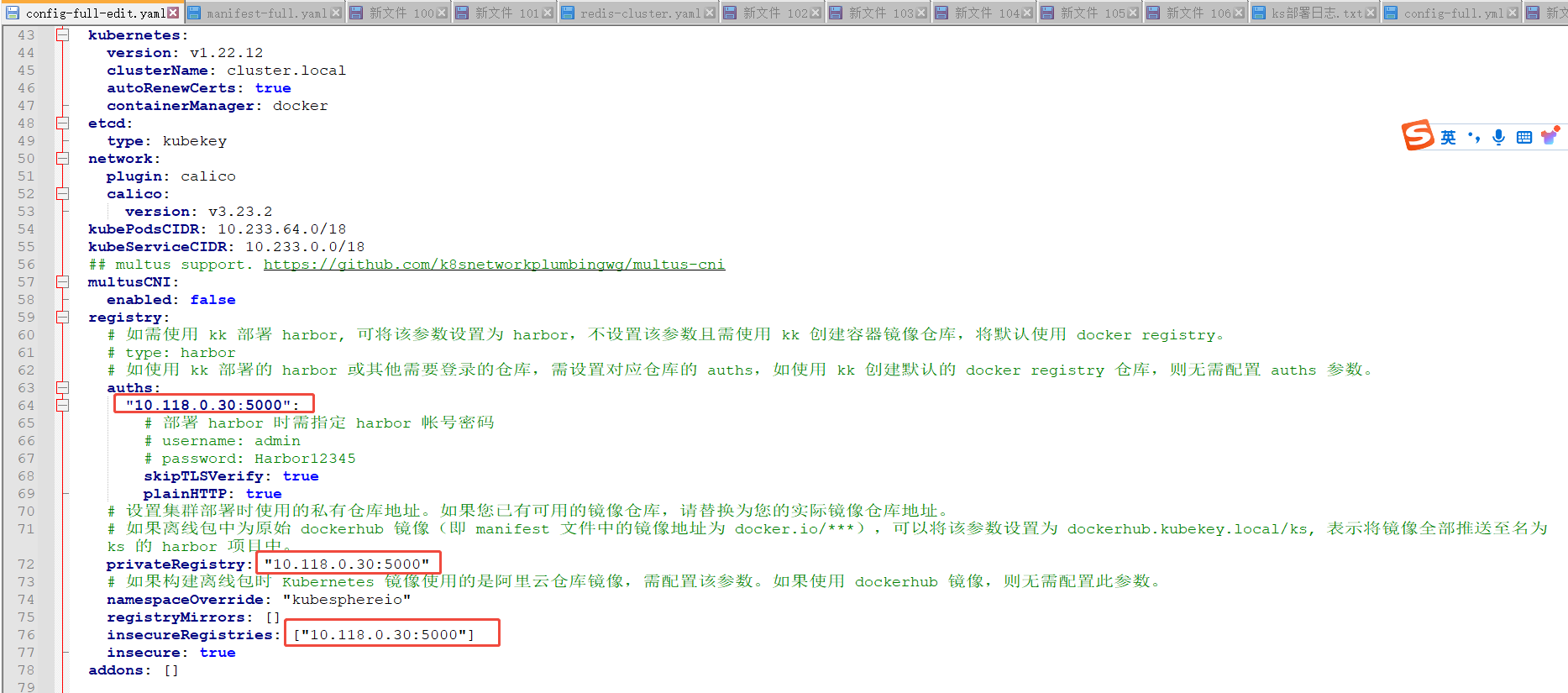

2.5 Edit the config-full.yaml configuration file

vim config-full.yaml

Set the Docker registry address, accessed over HTTP:

2.6 Push all images to the registry

# Configure the target machines' connection details and the registry address
vim config-full.yaml

# Helm-related images
./kk artifact image push -f config-full.yaml -a kubesphere-only-helm.tar.gz

# imom-related images (run rm -rf kubekey first)
rm -rf kubekey
./kk artifact image push -f config-full.yaml -a kubesphere-imom.tar.gz

# k8s-related images (run rm -rf kubekey first)
rm -rf kubekey
./kk artifact image push -f config-full.yaml -a kubesphere-full.tar.gz
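The three pushes above follow a fixed pattern, so they can be wrapped in a loop. This is a sketch under the assumption that `kk` and the three archives sit in the current directory; `push_artifacts` and the `DRY_RUN` switch are illustrative names, not KubeKey features.

```shell
#!/usr/bin/env bash
# Sketch: push KubeKey artifacts in order, clearing the kubekey/ working
# directory between runs as the steps above do.
# Set DRY_RUN=1 to print the commands instead of running them.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

push_artifacts() {
  local config="$1"; shift
  local first=1 artifact
  for artifact in "$@"; do
    # The steps above remove kubekey/ before every push except the first one.
    if [ "$first" -eq 0 ]; then
      run rm -rf kubekey
    fi
    first=0
    run ./kk artifact image push -f "$config" -a "$artifact" || return 1
  done
}

# Usage:
#   push_artifacts config-full.yaml kubesphere-only-helm.tar.gz \
#     kubesphere-imom.tar.gz kubesphere-full.tar.gz
```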

Browse the registry to confirm that the images have been pushed:
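Besides the web UI, the registry can be checked from the command line through the Docker Registry HTTP API v2 catalog endpoint (`/v2/_catalog`). The sketch below assumes `curl` is installed; the JSON parsing is deliberately naive (no `jq`, which may be missing on an offline machine) and only handles the flat `{"repositories":[...]}` response. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: list repositories through the registry's v2 catalog endpoint.
parse_catalog() {
  # Turns {"repositories":["a","b"]} into one repository name per line.
  tr -d '{}[]' | sed 's/"repositories"://' | tr ',' '\n' | tr -d '"'
}

list_registry_repos() {
  local registry="${1:-10.118.0.30:5000}"
  # n=1000 asks for up to 1000 entries in a single page.
  curl -fsS "http://${registry}/v2/_catalog?n=1000" | parse_catalog
}

# Usage:
#   list_registry_repos 10.118.0.30:5000
#   list_registry_repos 10.118.0.30:5000 | grep -c .   # number of repositories
```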

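3. Install the k8s/KubeSphere cluster

3.1 Prepare the target machines (run on master1, master2, master3, node1, node2, node3 and node4)

# Install the base dependency RPM packages
yum localinstall -y offline_base_yum/*.rpm

# Install the k8s dependency RPM packages
yum localinstall -y offline_k8s_yum/*.rpm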

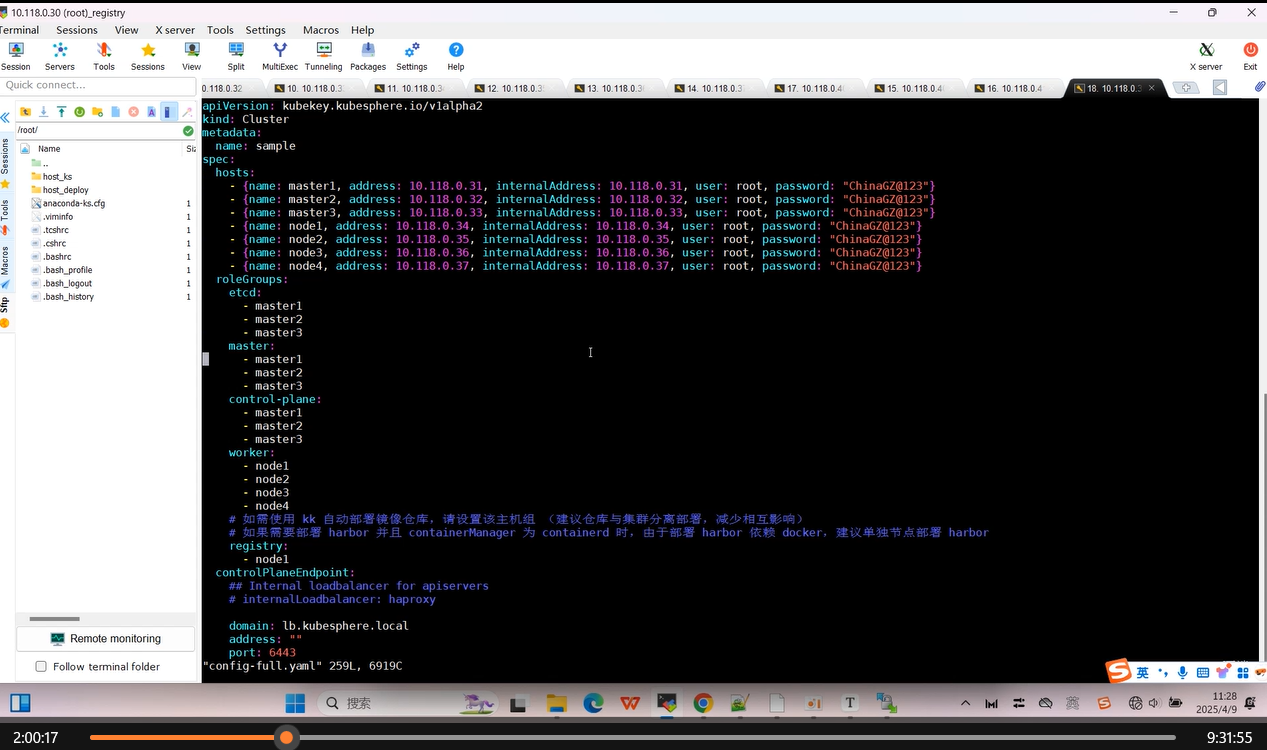

3.2 Install the k8s cluster (run on the deployment machine, IP: 10.118.0.30)

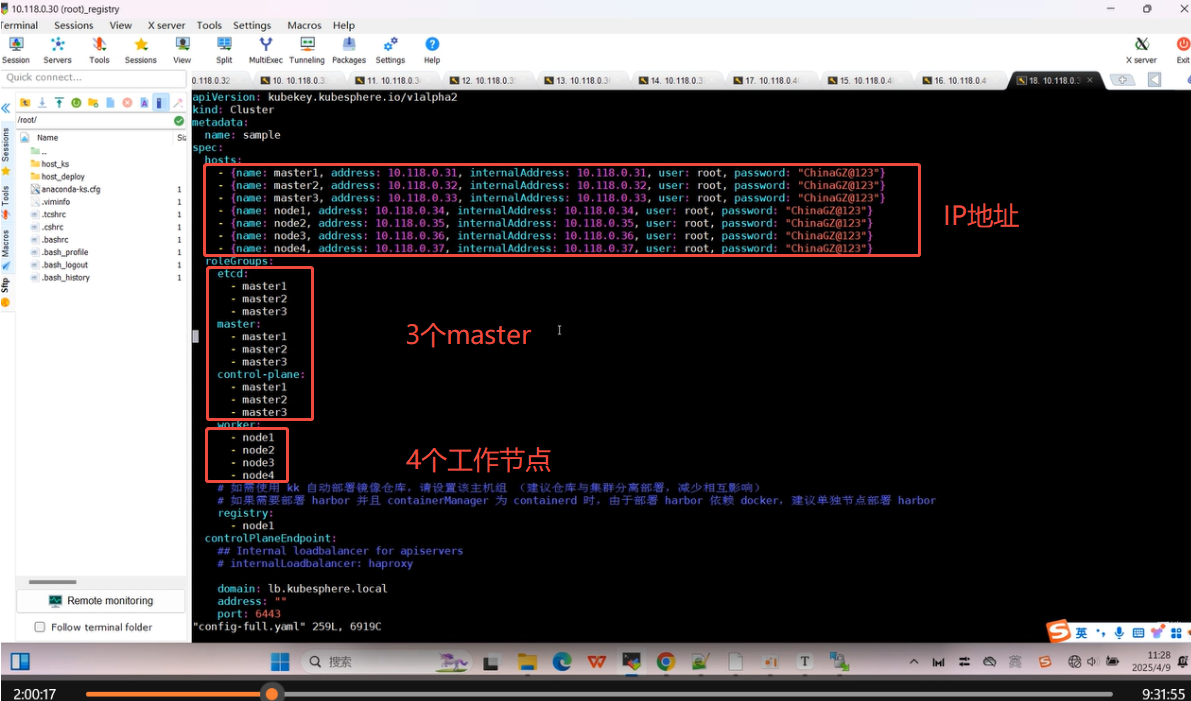

# Configure the target machines' connection details and the registry address
vim config-full.yaml

master1, master2 and master3 are the master nodes; node1, node2, node3 and node4 are the worker nodes.

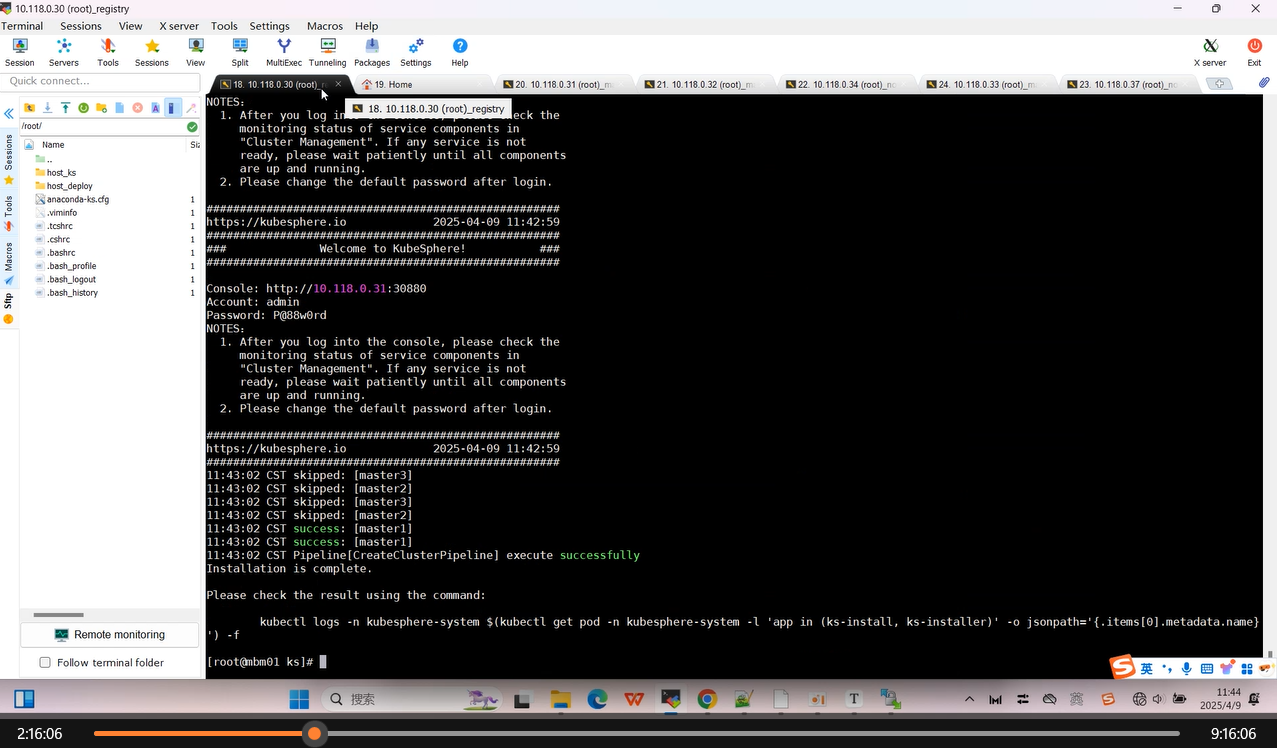

**Install the k8s cluster:**

./kk create cluster -f config-full.yaml -a kubesphere-full.tar.gz --with-local-storage

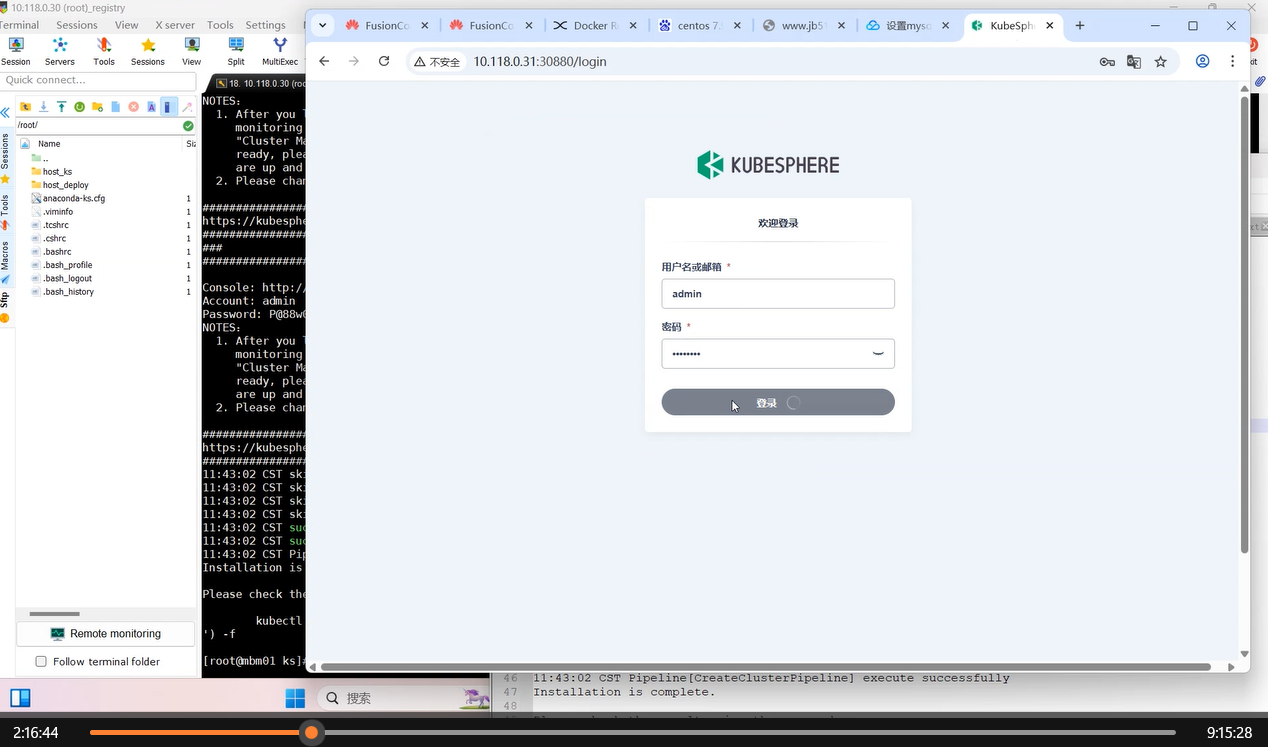

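When the installation finishes, the KubeSphere console address, account and password are printed. Log in to the KubeSphere console with that account.

Note: the redis:5.0.14-alpine image is missing at this point; see "Troubleshooting".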

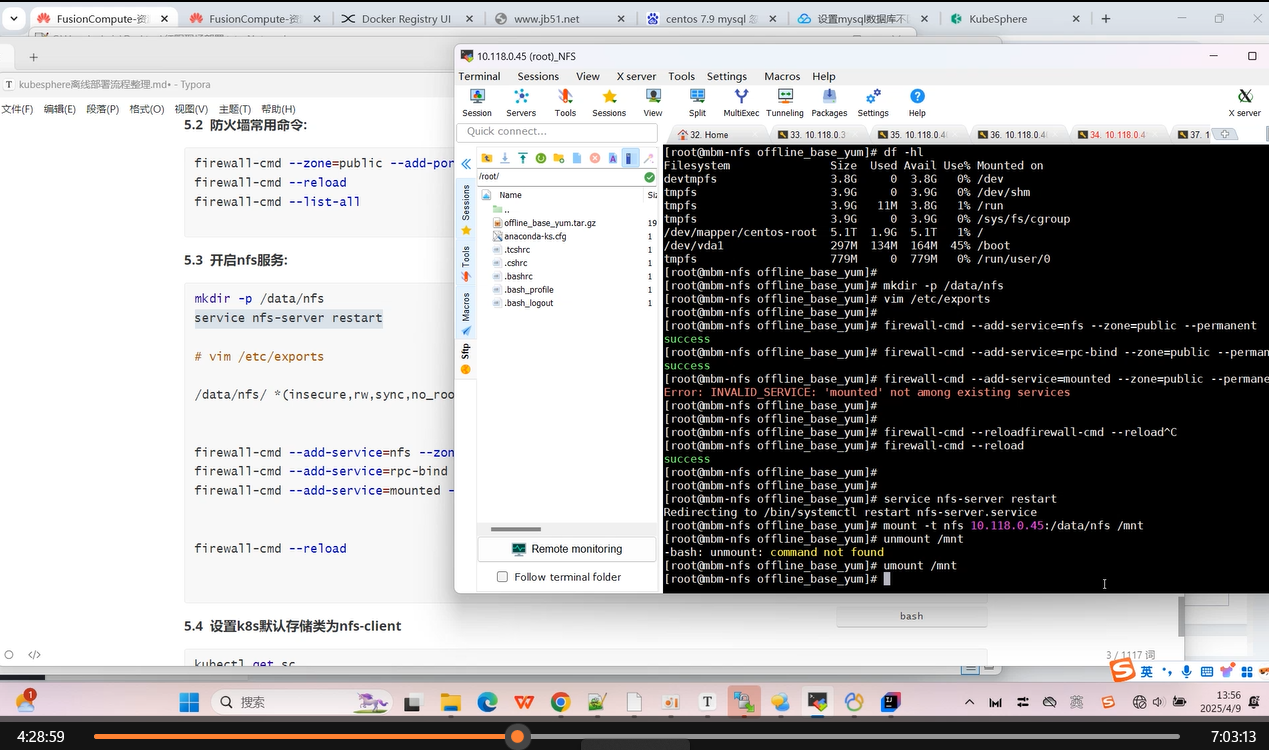

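4. Install the NFS service (run on the NFS machine, IP: 10.118.0.45)

Install the base packages:

# Install the base RPM packages (vim, nfs)
yum localinstall -y offline_base_yum/*.rpm

4.1 Enable the NFS service:

mkdir -p /data/nfs
# Add the export to /etc/exports (vim /etc/exports) before (re)starting nfs-server:
/data/nfs/ *(insecure,rw,sync,no_root_squash)
service nfs-server restart
systemctl enable nfs-server

# Allow the NFS services through the firewall
firewall-cmd --add-service=nfs --zone=public --permanent
firewall-cmd --add-service=rpc-bind --zone=public --permanent
firewall-cmd --reload

4.2 Verify that the NFS service is usable:

# Run the following on one of the nodes (e.g. node1); if it reports no error, the NFS export can be mounted
mount -t nfs 10.118.0.45:/data/nfs /mnt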
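A slightly stronger check than a bare `mount` also confirms that the export is writable and unmounts afterwards. This sketch assumes it runs as root on a node with the NFS client tools installed; `nfs_smoke_test` and the test file name are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: mount an NFS export, check that it is writable, then clean up.
# DRY_RUN=1 prints the commands instead of running them.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

nfs_smoke_test() {
  local server="$1" export_path="$2" mnt="${3:-/mnt}"
  run mount -t nfs "${server}:${export_path}" "$mnt" || return 1
  # Write check: fails if the export is read-only for root.
  run touch "${mnt}/.nfs_write_test" || { run umount "$mnt"; return 1; }
  run rm -f "${mnt}/.nfs_write_test"
  run umount "$mnt"   # leave the mount point as it was
}

# Usage on node1 (as root):
#   nfs_smoke_test 10.118.0.45 /data/nfs
```

`showmount -e` is deliberately not used here: it needs the mountd port, which the firewall rules above do not open.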

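5. Install MySQL

5.1 Install the mysql1 service (run on mysql1, IP: 10.118.0.40)

5.1.1 Install MySQL

# Install the base RPM packages (vim, nfs)
yum localinstall -y offline_base_yum/*.rpm

# Install MySQL
yum localinstall -y offline_mysql_yum/*.rpm

5.1.2 Configure MySQL

vim /etc/my.cnf

# Make table names case-insensitive. Set this before mysqld is started for the first
# time: MySQL 8.0 only accepts lower_case_table_names at data-directory initialization.
[mysqld]
lower_case_table_names=1

systemctl start mysqld
systemctl enable mysqld
# Look up the temporary root password generated on first start
grep 'temporary password' /var/log/mysqld.log

# Log in to MySQL with the temporary password
mysql -u root -p

# Replace the expired temporary password with one that passes the default (MEDIUM) password policy
ALTER USER 'root'@'localhost' IDENTIFIED BY '123.nhdTaVMSAC';
# Lower the password policy to LOW so the simpler password below is accepted
set global validate_password.policy=0;
ALTER USER 'root'@'localhost' IDENTIFIED BY 'nhdTaVMSAC';
create user 'root'@'%' IDENTIFIED WITH mysql_native_password BY 'nhdTaVMSAC';

# Allow remote connections
# ALTER USER 'root'@'%' IDENTIFIED BY 'abcabC!9090';
GRANT ALL PRIVILEGES ON *.* TO 'root'@'%';
FLUSH PRIVILEGES;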
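The temporary password can also be extracted directly instead of being read off the grep output. A minimal sketch, assuming the default log path /var/log/mysqld.log and the MySQL 8 log line format that ends in `root@localhost: <password>`; the function name is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: print the most recent temporary root password from the MySQL error log.
# The password is the last whitespace-separated field of the log line.
mysql_temp_password() {
  local log="${1:-/var/log/mysqld.log}"
  grep 'temporary password' "$log" | tail -n 1 | awk '{print $NF}'
}

# Usage (as root on the MySQL machine), then paste the value at the mysql -u root -p prompt:
#   mysql_temp_password
```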

5.1.3 Open the MySQL port in the firewall

firewall-cmd --zone=public --add-port=3306/tcp --permanent
firewall-cmd --reload
firewall-cmd --list-all

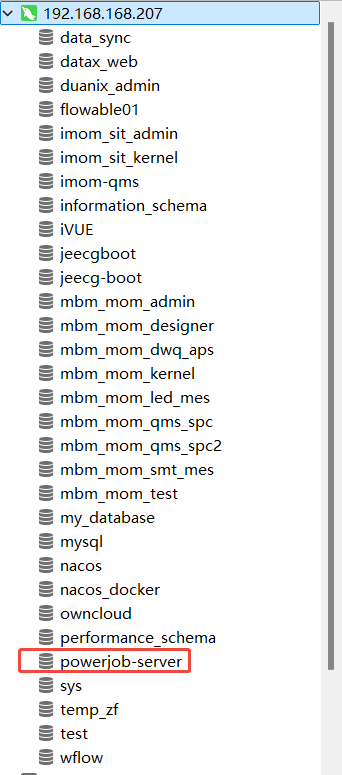

5.1.4 Create the business database

CREATE DATABASE `powerjob-server` CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci;

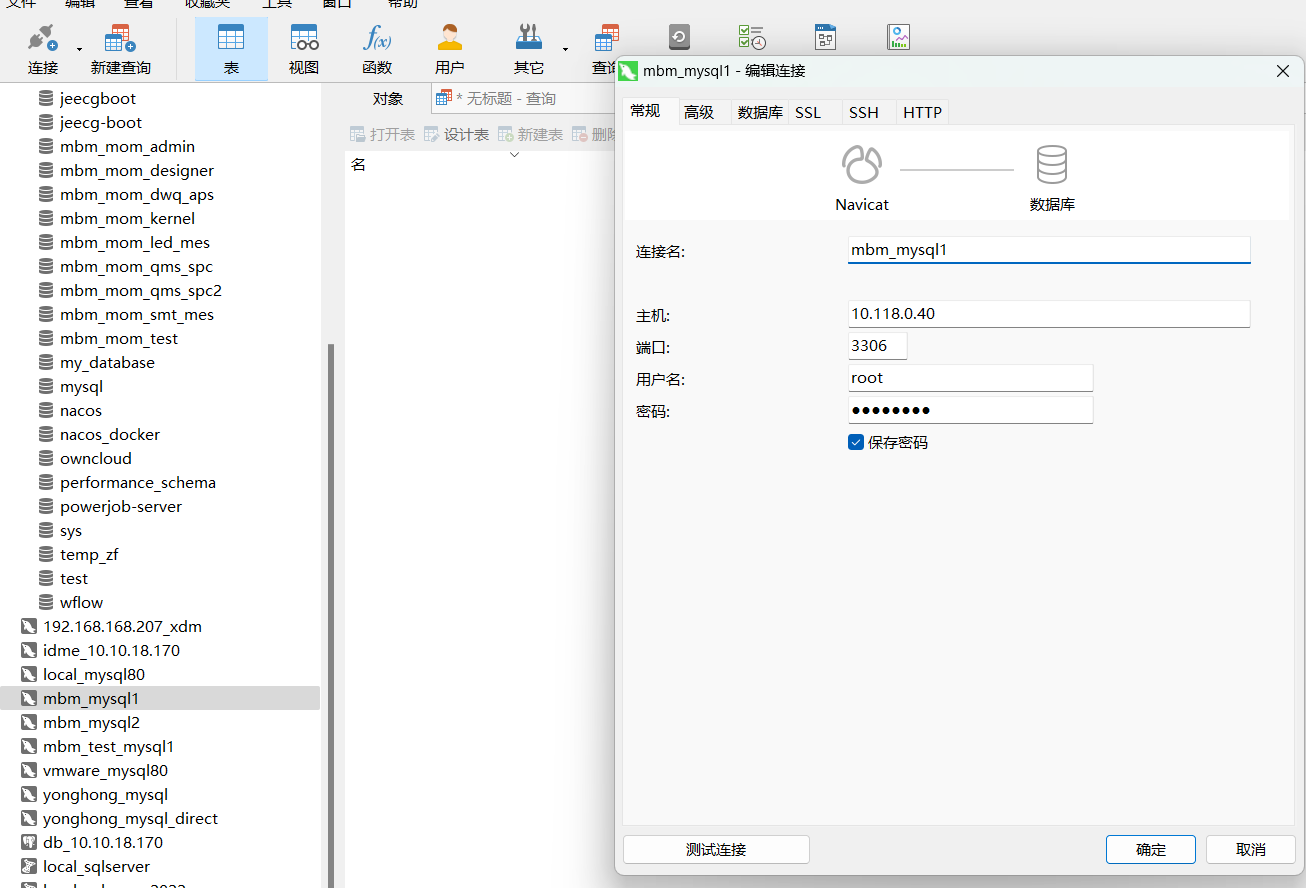

5.1.5 Configure a Navicat connection to the database
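If Navicat is not at hand, the remote root account and the new database can be checked with the `mysql` command-line client from any other machine. A sketch, assuming the client is installed there; `check_mysql_remote` is a hypothetical helper, and `-p` makes the client prompt for the password.

```shell
#!/usr/bin/env bash
# Sketch: confirm that root@'%' can log in remotely and that the database exists.
# DRY_RUN=1 prints the command instead of running it.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

check_mysql_remote() {
  local host="$1" db="$2"
  # An empty result means the login worked but the database is missing.
  run mysql -h "$host" -P 3306 -u root -p -e "SHOW DATABASES LIKE '${db}';"
}

# Usage:
#   check_mysql_remote 10.118.0.40 powerjob-server
```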

5.2 Install the mysql2 service (run on mysql2, IP: 10.118.0.41)

5.2.1 Install MySQL

# Install the base RPM packages (vim, nfs)
yum localinstall -y offline_base_yum/*.rpm

# Install MySQL
yum localinstall -y offline_mysql_yum/*.rpm

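5.2.2 Configure MySQL

This uses the MySQL configuration recommended by Huawei.

vim /etc/my.cnf

# Configure case-insensitive table names and the other settings below
[mysqld]
# On virtual machines, always put the data on a separate data disk, not the system disk;
# check that the data disk is initialized and mounted, and that it can be expanded.
# datadir, socket, log-error, pid-file: keep the default values, no change needed.
skip-name-resolve
explicit_defaults_for_timestamp
max_allowed_packet=16M
character-set-server=UTF8
slow_query_log=1
long_query_time=3.0
# Maximum number of connections: default 151, set at most 3000
max_connections=1000
interactive_timeout=60
# lower_case_table_names: 0 = case-sensitive, 1 = case-insensitive.
# By default on Linux, MySQL treats letter case as follows:
#   1) database and table names are case-sensitive;
#   2) table aliases are case-sensitive;
#   3) column names and column aliases are case-insensitive in all cases;
#   4) user-defined variable names are case-insensitive.
# Set it before mysqld is started for the first time (MySQL 8.0 fixes it at initialization).
lower_case_table_names=1
# ONLY_FULL_GROUP_BY must be removed from sql_mode; change it here in the configuration file
sql_mode=IGNORE_SPACE,STRICT_TRANS_TABLES,NO_ZERO_IN_DATE,NO_ZERO_DATE,ERROR_FOR_DIVISION_BY_ZERO,NO_ENGINE_SUBSTITUTION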
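Because MySQL 8.0 refuses to start if `lower_case_table_names` differs from the value used at initialization, it is worth checking my.cnf before the first `systemctl start mysqld`. A sketch with a hypothetical helper; it only checks that an uncommented `lower_case_table_names=1` line exists, not that it sits under `[mysqld]`.

```shell
#!/usr/bin/env bash
# Sketch: succeed only if the file contains an active lower_case_table_names=1 line.
has_lctn_1() {
  local cnf="${1:-/etc/my.cnf}"
  grep -Eq '^[[:space:]]*lower_case_table_names[[:space:]]*=[[:space:]]*1[[:space:]]*$' "$cnf"
}

# Usage before the first start:
#   if has_lctn_1 /etc/my.cnf; then systemctl start mysqld; else echo "set lower_case_table_names=1 first" >&2; fi
```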

**Set the MySQL password and enable remote connections:**

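systemctl start mysqld
systemctl enable mysqld
# Look up the temporary root password generated on first start
grep 'temporary password' /var/log/mysqld.log

# Log in to MySQL with the temporary password
mysql -u root -p

# Replace the expired temporary password with one that passes the default (MEDIUM) password policy
ALTER USER 'root'@'localhost' IDENTIFIED BY '123.nhdTaVMSAC';
# Lower the password policy to LOW so the simpler password below is accepted
set global validate_password.policy=0;
ALTER USER 'root'@'localhost' IDENTIFIED BY 'nhdTaVMSAC';
create user 'root'@'%' IDENTIFIED WITH mysql_native_password BY 'nhdTaVMSAC';

# Allow remote connections
# ALTER USER 'root'@'%' IDENTIFIED BY 'abcabC!9090';
GRANT ALL PRIVILEGES ON *.* TO 'root'@'%';
FLUSH PRIVILEGES;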

5.2.3 Open the MySQL port in the firewall

firewall-cmd --zone=public --add-port=3306/tcp --permanent
firewall-cmd --reload
firewall-cmd --list-all

5.2.4 Create the business database

CREATE DATABASE `powerjob-server` CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci;

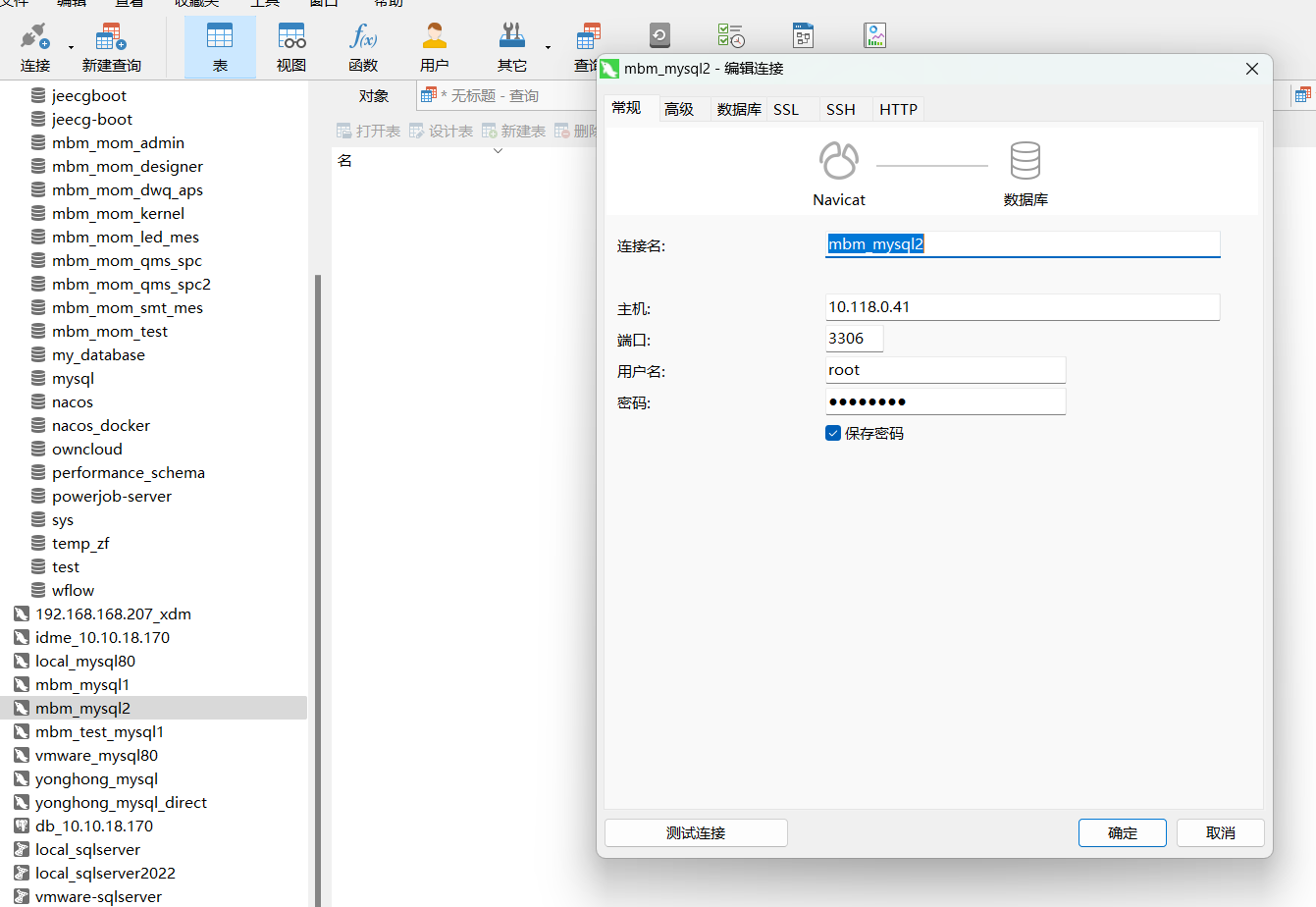

5.2.5 Configure a Navicat connection to the database

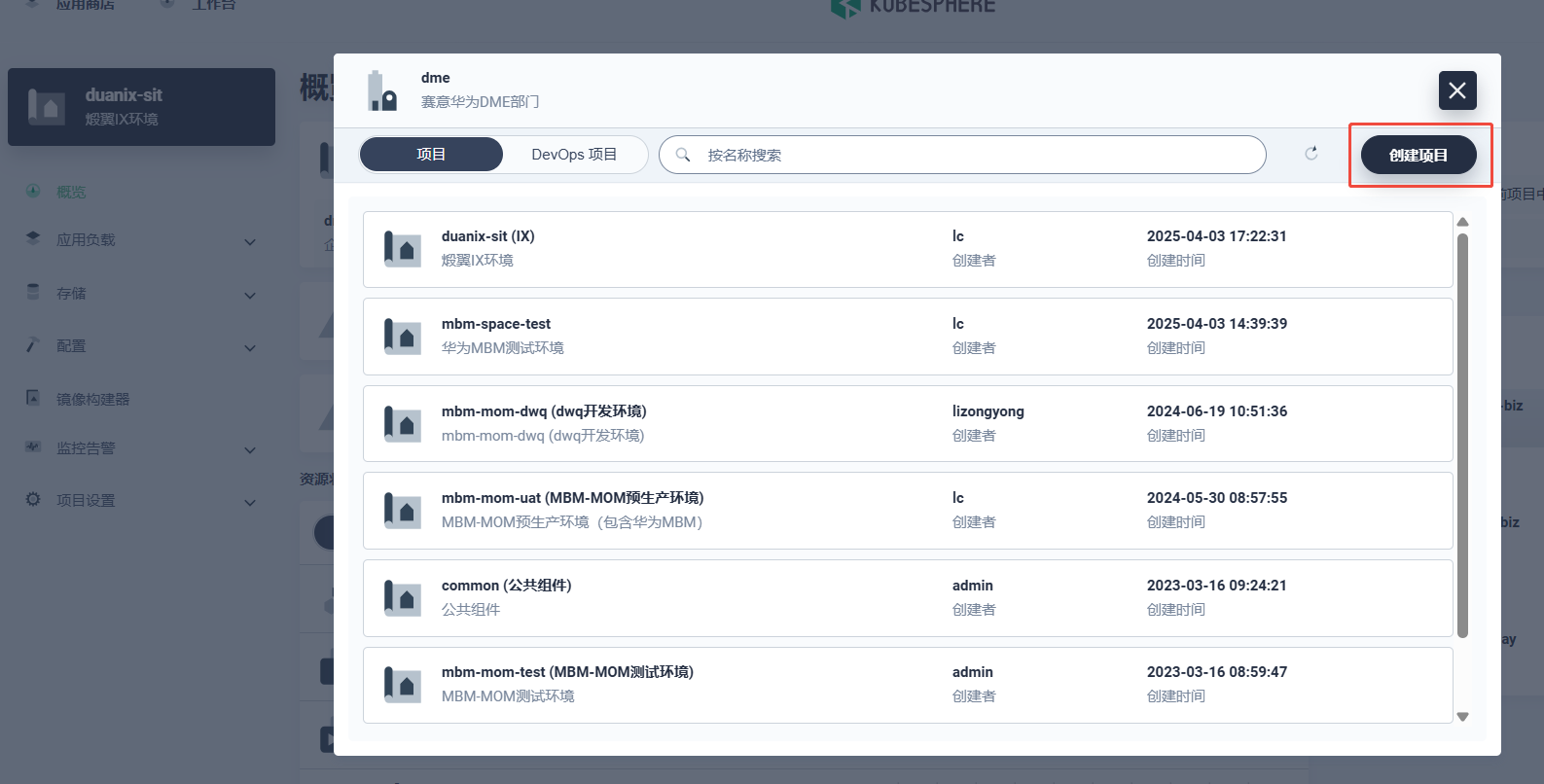

6. Install the middleware that imom depends on (run on master1, IP: 10.118.0.31)

Prerequisite: create the three namespaces common, imom and mdm in KubeSphere.
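The namespaces can also be created from master1 with kubectl. A sketch: piping `kubectl create --dry-run=client -o yaml` into `kubectl apply` keeps the step idempotent. Namespaces created this way are not assigned to a KubeSphere workspace, so the console remains the simpler route if you need that. `create_namespaces` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Sketch: create namespaces without failing if they already exist.
# DRY_RUN=1 prints the pipelines instead of running them.
create_namespaces() {
  local ns
  for ns in "$@"; do
    if [ "${DRY_RUN:-0}" = 1 ]; then
      echo "kubectl create namespace $ns --dry-run=client -o yaml | kubectl apply -f -"
    else
      kubectl create namespace "$ns" --dry-run=client -o yaml | kubectl apply -f - || return 1
    fi
  done
}

# Usage on master1:
#   create_namespaces common imom mdm
```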

6.1 Prepare the artifact packages

tar -xvf helm_tgz.tar.gz

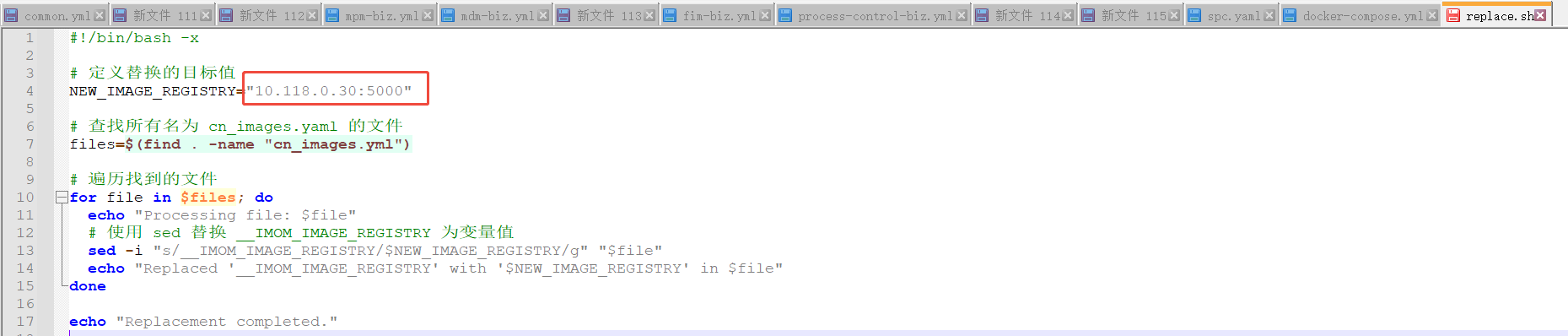

Change NEW_IMAGE_REGISTRY to the local registry address: 10.118.0.30:5000

Update the registry address in every cn_images.yml:

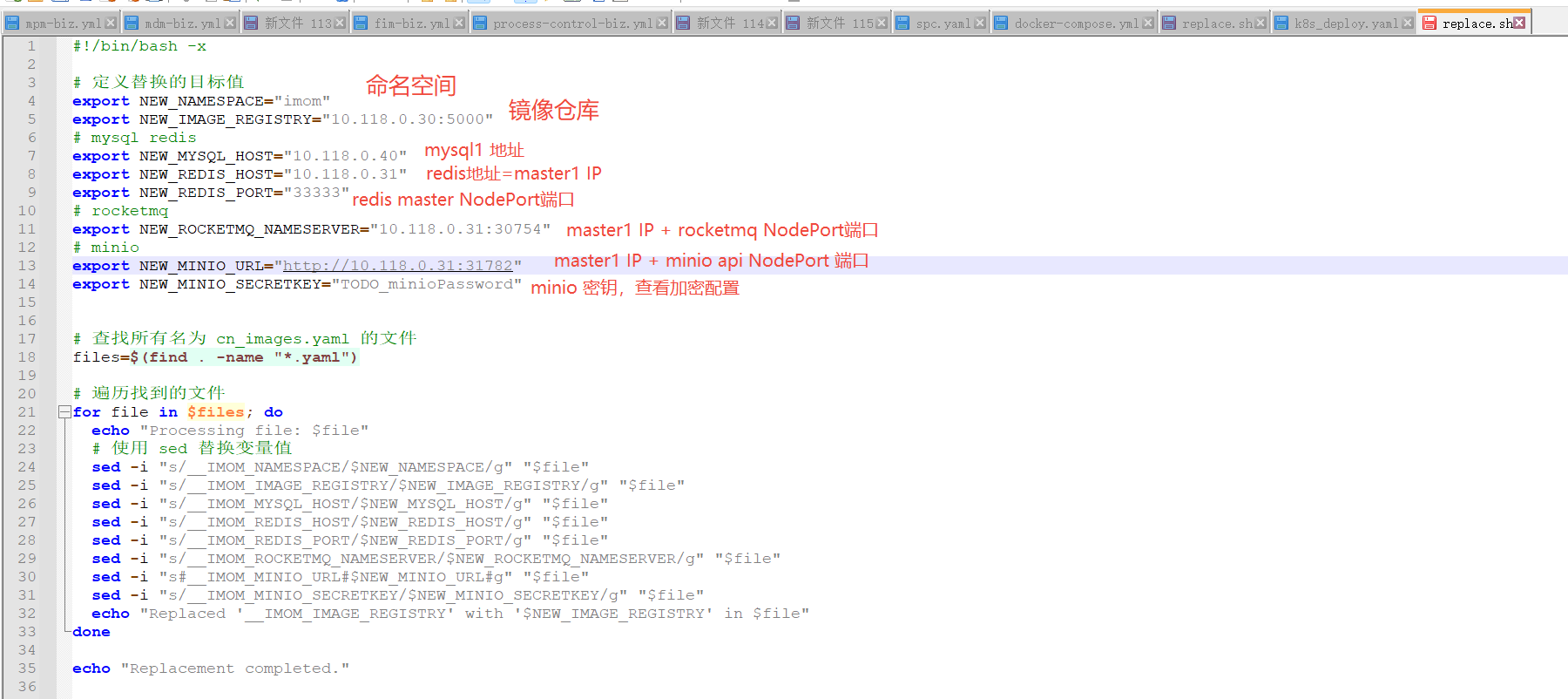

# Edit the settings in replace.sh, then run ./replace.sh to replace the placeholders
./replace.sh

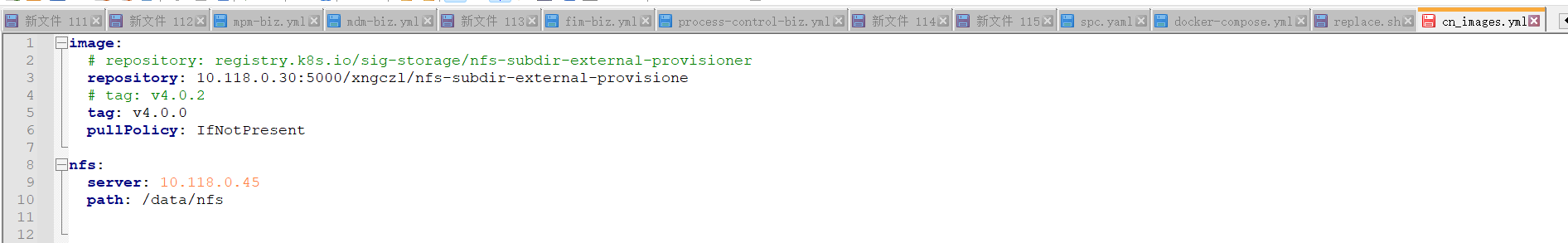

6.2 Install the NFS provisioner

Prerequisite: the NFS service is already installed on 10.118.0.45.

Edit cn_images.yml and set the NFS server IP address to 10.118.0.45.

# The target server must already run nfs-server
# https://blog.csdn.net/xixihahalelehehe/article/details/131756156
# Set the target NFS server IP in cn_images.yml
cd nfs   # relative to the directory where helm_tgz.tar.gz was extracted
helm install nfs-subdir-external-provisioner ./nfs-subdir-external-provisioner-4.0.18.tgz -f cn_images.yml

Change the default StorageClass to nfs-client:

# Run the following on master1
kubectl patch storageclass local -p '{"metadata": {"annotations": {"storageclass.beta.kubernetes.io/is-default-class": null}}}'
kubectl patch storageclass nfs-client -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'
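After the two patches, exactly one StorageClass should be marked `(default)`. This sketch reads the table printed by `kubectl get storageclass` and prints the names of the default classes; the helper name is hypothetical and it relies on kubectl's default column layout.

```shell
#!/usr/bin/env bash
# Sketch: print every StorageClass that kubectl marks as "(default)".
# Reads `kubectl get storageclass` output on stdin; the header line is skipped.
default_storageclasses() {
  awk 'NR > 1 && $2 == "(default)" { print $1 }'
}

# Usage on master1 (expected output: nfs-client only):
#   kubectl get storageclass | default_storageclasses
```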

6.3 Install Redis

cd redis   # relative to the directory where helm_tgz.tar.gz was extracted
# Single-instance mode
helm install redis-broker ./redis-19.6.4.tgz -f cn_images.yml -n common

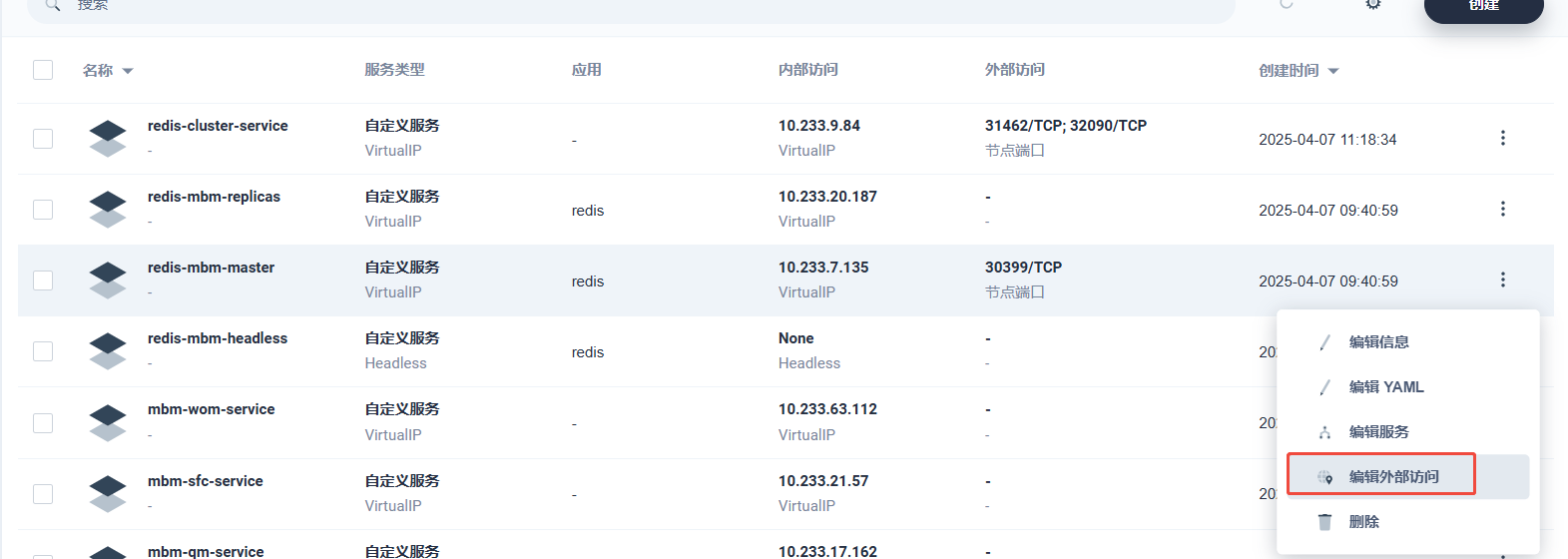

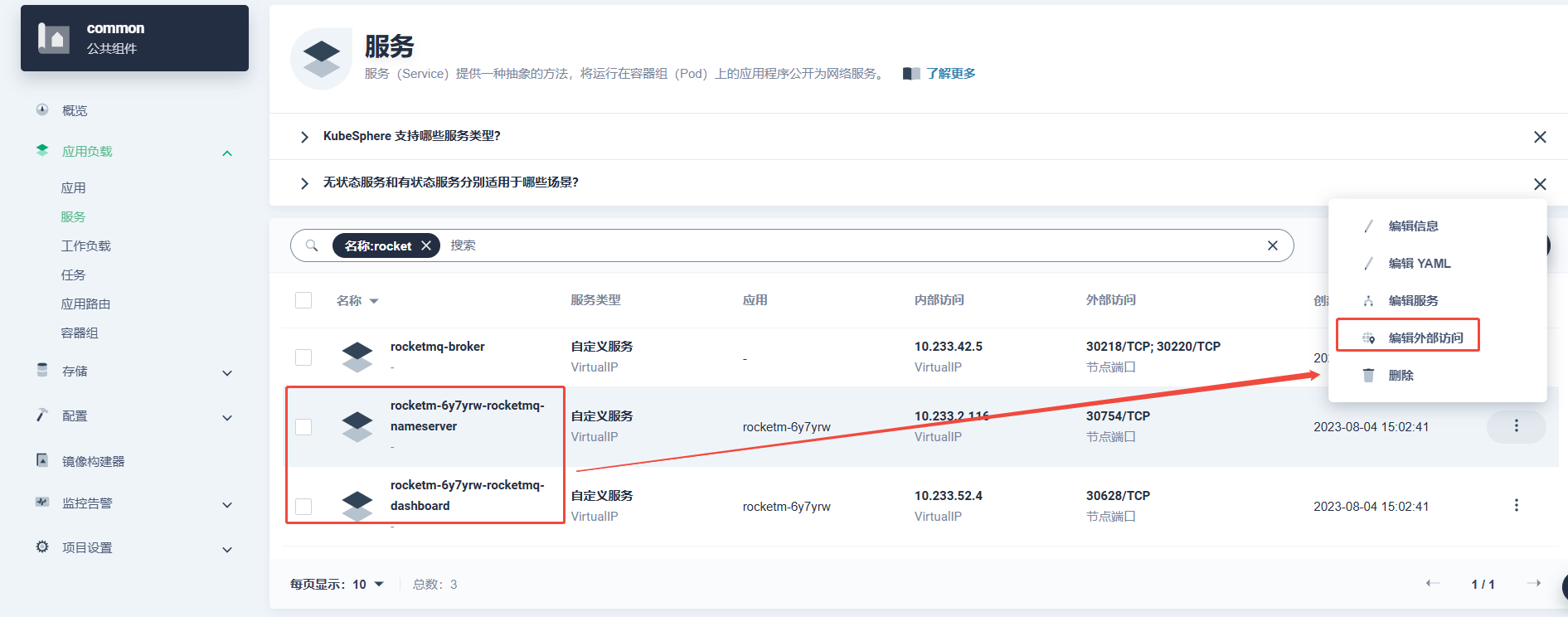

Switch redis-master to NodePort access and note down its NodePort number.
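Switching a Service to NodePort and reading the assigned port can also be done with kubectl instead of the console. A sketch: the Service name `redis-broker-master` is an assumption based on how Bitnami-style charts name the master Service of a release called `redis-broker`; check `kubectl -n common get svc` for the real name. `expose_nodeport` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: switch a Service to NodePort and print the node port(s) it was given.
# DRY_RUN=1 prints the commands instead of running them.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

expose_nodeport() {
  local ns="$1" svc="$2"
  run kubectl -n "$ns" patch service "$svc" -p '{"spec":{"type":"NodePort"}}' || return 1
  run kubectl -n "$ns" get service "$svc" -o 'jsonpath={.spec.ports[*].nodePort}'
}

# Usage:
#   expose_nodeport common redis-broker-master
```

The same helper applies to the MinIO Service and to rocketmq-nameserver and rocketmq-dashboard below, once their actual Service names are confirmed.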

6.4 Install MinIO

cd minio   # relative to the directory where helm_tgz.tar.gz was extracted
# Single-instance mode
helm install minio-broker ./minio-14.6.32.tgz -f cn_images.yml -n common

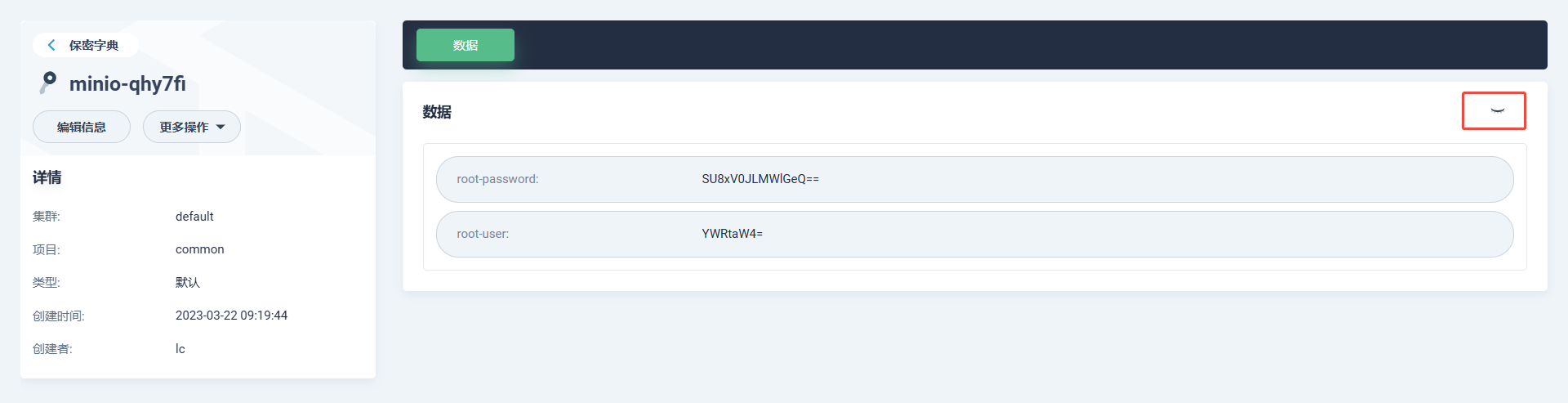

# The console password is stored in a Secret

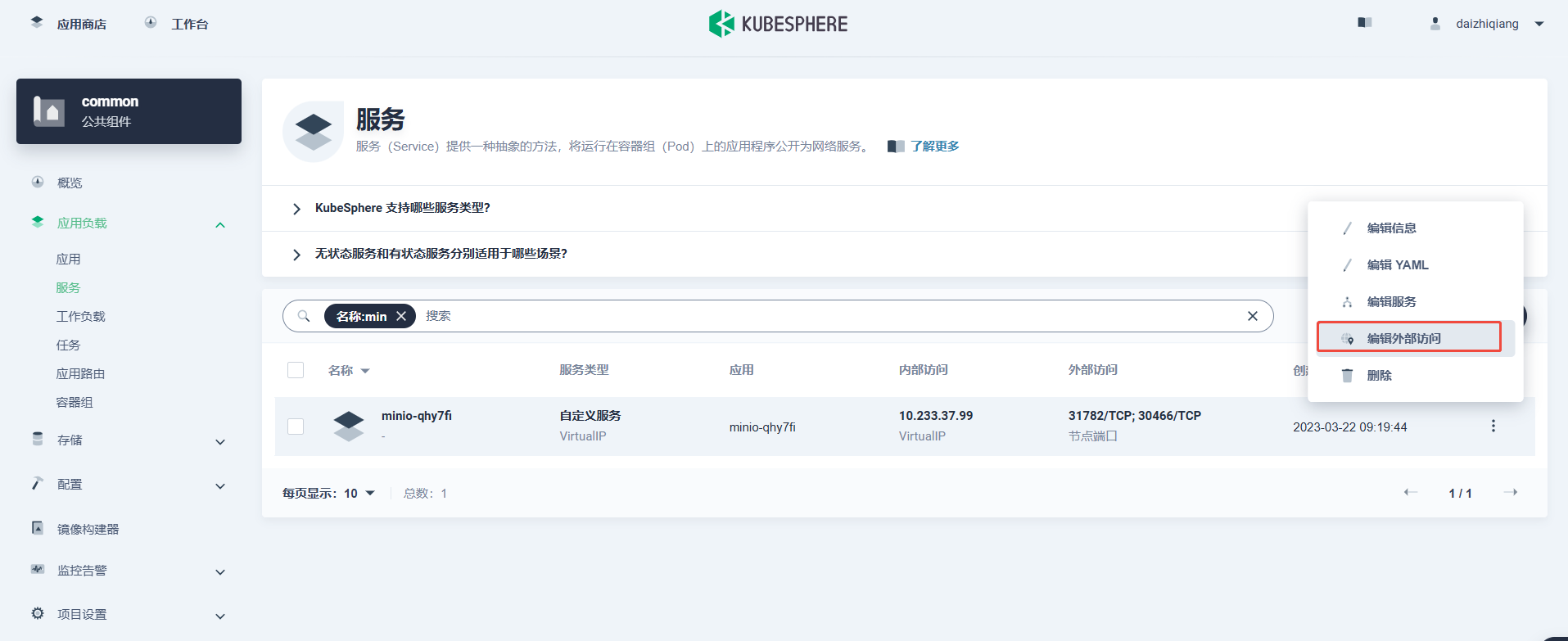

Likewise, switch MinIO to NodePort access and note down its NodePort number.

View the MinIO account details in the Secret:

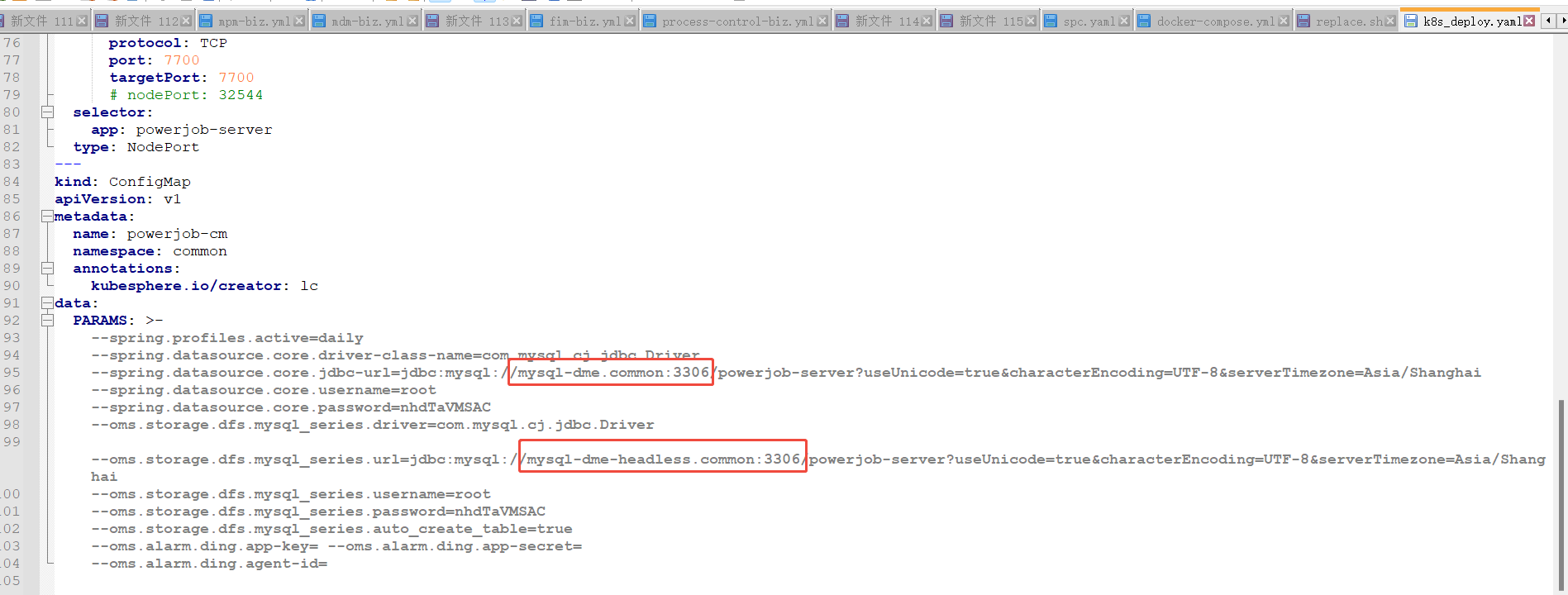
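The same credentials can be read from the command line. This sketch assumes a Bitnami-style Secret named `minio-broker` in the `common` namespace with the keys `root-user` and `root-password`; verify both with `kubectl -n common get secret` before relying on it. `secret_value` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: print one decoded value from a Kubernetes Secret.
# Keys containing dots would need escaping in the jsonpath; these keys only contain dashes.
secret_value() {
  local ns="$1" secret="$2" key="$3"
  kubectl -n "$ns" get secret "$secret" -o "jsonpath={.data.${key}}" | base64 -d
}

# Usage:
#   secret_value common minio-broker root-user; echo
#   secret_value common minio-broker root-password; echo
```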

6.5 Install PowerJob

Use Navicat or a similar tool to create the powerjob-server database on mysql1.

Edit powerjob/k8s_deploy.yaml and update the following MySQL connection details:

Create the PowerJob workload:

kubectl apply -f powerjob/k8s_deploy.yaml

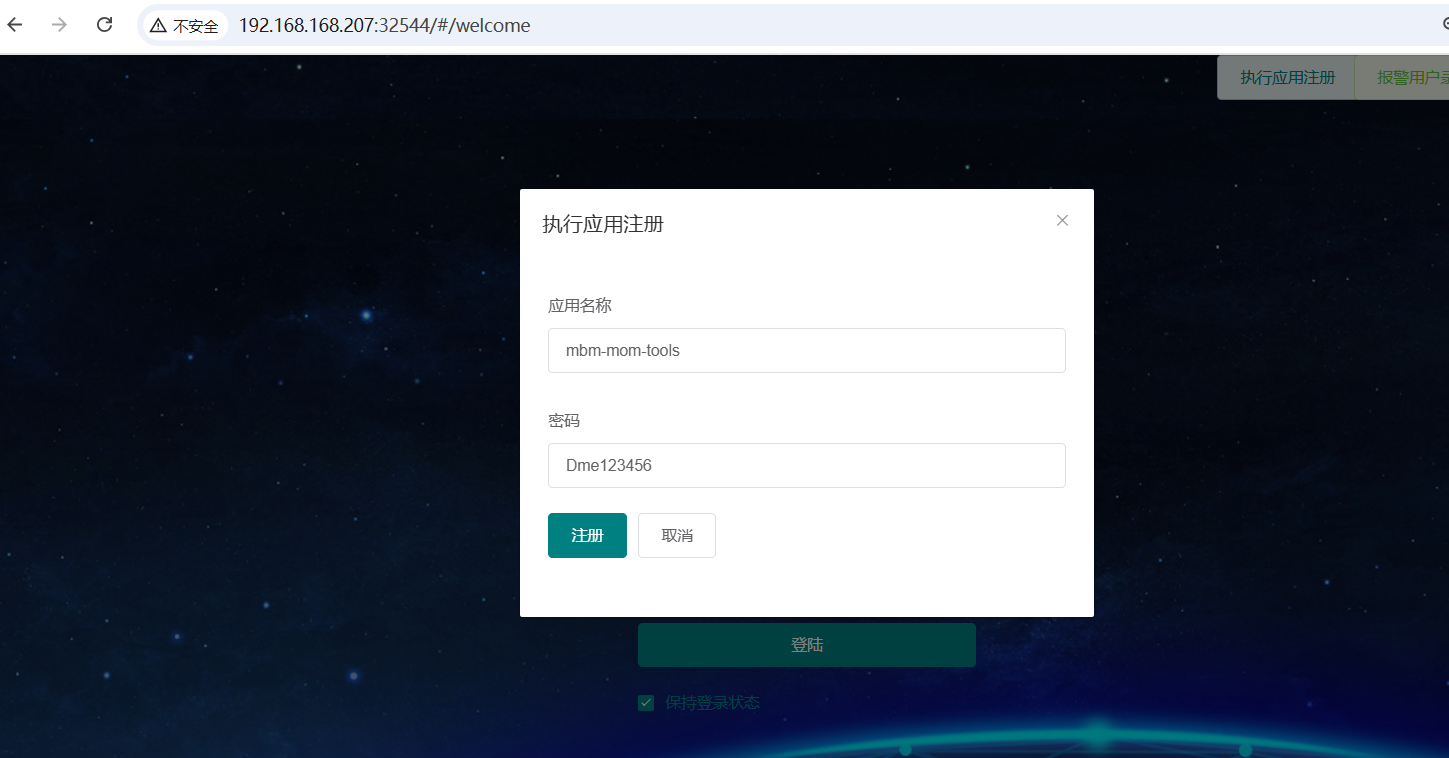

Register the two applications mbm-mom-tools and mbm-mom-smt in the PowerJob console.

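6.6 Install RocketMQ

# Reference: https://blog.csdn.net/silentcoo1/article/details/142376335
# Single-instance mode (run from the directory containing rocketmq-12.0.2.tgz)
helm install rocketmq-broker ./rocketmq-12.0.2.tgz -f cn_images.yml -n common
# Proxy mode is disabled; enable NodePort manually after the cluster is installed
# The console credentials are admin/admin

After installation, switch both rocketmq-nameserver and rocketmq-dashboard to NodePort access, and note down the NodePort number of rocketmq-nameserver.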

7. Install imom

7.1 Edit the settings in replace.sh

7.2 Create the configuration and the Deployments:

# Run ./replace.sh to replace the placeholders
./replace.sh
kubectl apply -f ui-conf.yaml
kubectl apply -f biz-conf.yaml
kubectl apply -f imom-deployments.yaml

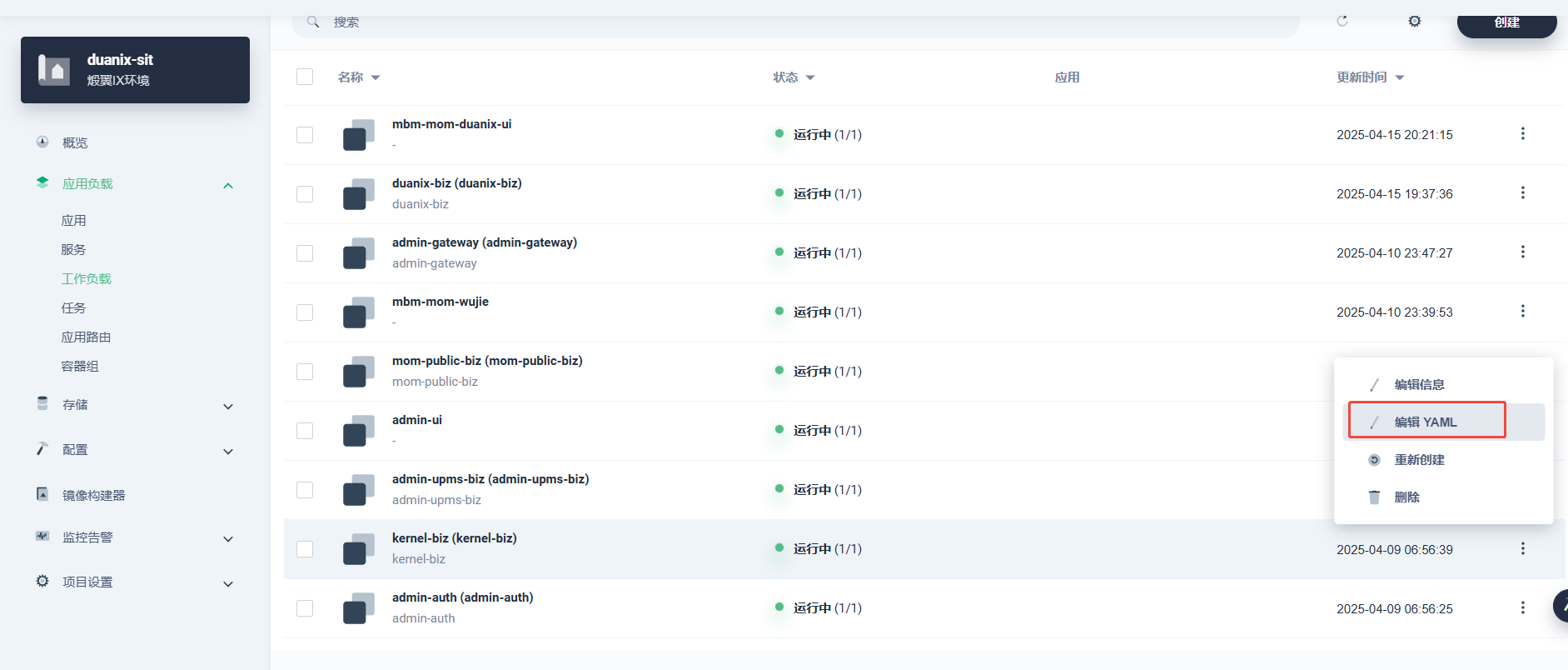

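7.3 Check that the Deployments are running and available

Watch the Deployment status in KubeSphere. If a Deployment does not start correctly, fix it by editing its YAML and the corresponding ConfigMap.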
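From the command line, `kubectl rollout status` gives the same answer as the console. A sketch, assuming the imom Deployments live in the `imom` namespace; `wait_for_deployments` is hypothetical and only reports (it does not fix) any Deployment that fails to become ready within the timeout.

```shell
#!/usr/bin/env bash
# Sketch: wait for every Deployment in a namespace to finish rolling out.
# DRY_RUN=1 prints the rollout commands instead of running them.
wait_for_deployments() {
  local ns="$1" timeout="${2:-300s}" d failed=0
  for d in $(kubectl -n "$ns" get deployments -o name); do
    if [ "${DRY_RUN:-0}" = 1 ]; then
      echo "kubectl -n $ns rollout status $d --timeout=$timeout"
    elif ! kubectl -n "$ns" rollout status "$d" --timeout="$timeout"; then
      echo "NOT READY: $d (inspect with: kubectl -n $ns describe $d)" >&2
      failed=1
    fi
  done
  return "$failed"
}

# Usage on master1:
#   wait_for_deployments imom 300s
```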

8. Install Huawei MDM

All of the following operations are performed in the mdm namespace of k8s. (The manifests and service addresses below use the namespace mbm, e.g. redis-cluster-service.mbm.svc.cluster.local; keep them consistent with the namespace you actually use.)

8.1 Create the business databases

**Connect to the mysql2 database with Navicat and run the following statements to create the databases. The collation must be utf8mb4_0900_ai_ci:**

CREATE DATABASE `msm` CHARACTER SET utf8mb4 COLLATE utf8mb4_0900_ai_ci;
CREATE DATABASE `mdm` CHARACTER SET utf8mb4 COLLATE utf8mb4_0900_ai_ci;
CREATE DATABASE `wom` CHARACTER SET utf8mb4 COLLATE utf8mb4_0900_ai_ci;
CREATE DATABASE `sfc` CHARACTER SET utf8mb4 COLLATE utf8mb4_0900_ai_ci;
CREATE DATABASE `em` CHARACTER SET utf8mb4 COLLATE utf8mb4_0900_ai_ci;
CREATE DATABASE `qm` CHARACTER SET utf8mb4 COLLATE utf8mb4_0900_ai_ci;
CREATE DATABASE `label` CHARACTER SET utf8mb4 COLLATE utf8mb4_0900_ai_ci;
CREATE DATABASE `les` CHARACTER SET utf8mb4 COLLATE utf8mb4_0900_ai_ci;
CREATE DATABASE `designer` CHARACTER SET utf8mb4 COLLATE utf8mb4_0900_ai_ci;
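The nine statements differ only in the database name, so they can be generated from a list, which rules out a mistyped collation on any one of them. A sketch with a hypothetical helper; pipe its output into the `mysql` client or paste it into Navicat.

```shell
#!/usr/bin/env bash
# Sketch: print one CREATE DATABASE statement per name, all with the required collation.
mdm_create_db_sql() {
  local db
  for db in "$@"; do
    printf 'CREATE DATABASE `%s` CHARACTER SET utf8mb4 COLLATE utf8mb4_0900_ai_ci;\n' "$db"
  done
}

# Usage (prompts for the mysql2 root password):
#   mdm_create_db_sql msm mdm wom sfc em qm label les designer | mysql -h 10.118.0.41 -u root -p
```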

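8.2 Install the Redis middleware

On a machine with internet access, pull the image with docker pull docker.m.daocloud.io/redis:latest, then export it with docker save docker.m.daocloud.io/redis:latest -o redis_latest.tar.

Import it into the local registry (run on the deployment machine):

# Load the image
docker load -i redis_latest.tar
# Tag it for the local registry
docker tag docker.m.daocloud.io/redis:latest 10.118.0.30:5000/redis:latest
# Push it to the local registry
docker push 10.118.0.30:5000/redis:latest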
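The load, tag and push steps can be wrapped for reuse with other images, for example the missing redis:5.0.14-alpine image. A sketch: `local_tag` drops the first path component when it looks like a registry host (contains `.` or `:`, or is `localhost`), which follows Docker's usual reference-parsing rule but is an assumption here; both function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: retag an image for the local registry and push it.
# DRY_RUN=1 prints the docker commands instead of running them.
local_tag() {
  local image="$1" registry="$2" first
  first="${image%%/*}"
  # Strip the source registry host if the first component looks like one.
  if [ "$first" != "$image" ] && [[ "$first" == *[.:]* || "$first" == localhost ]]; then
    image="${image#*/}"
  fi
  echo "${registry}/${image}"
}

run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

mirror_from_tar() {
  local tar="$1" image="$2" registry="$3" target
  target="$(local_tag "$image" "$registry")"
  run docker load -i "$tar" || return 1
  run docker tag "$image" "$target" || return 1
  run docker push "$target"
}

# Usage on the deployment machine (10.118.0.30):
#   mirror_from_tar redis_latest.tar docker.m.daocloud.io/redis:latest 10.118.0.30:5000
```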

vim redis_cluster.yaml

kind: StatefulSet
apiVersion: apps/v1
metadata:
  name: redis-cluster
  namespace: mbm
spec:
  replicas: 6
  selector:
    matchLabels:
      app: redis-cluster
  template:
    metadata:
      labels:
        app: redis-cluster
    spec:
      volumes:
        - name: redis-conf
          configMap:
            name: redis-cluster-config
            items:
              - key: redis.conf
                path: redis.conf
      containers:
        - name: redis
          image: 10.118.0.30:5000/redis:latest
          command:
            - redis-server
          args:
            - /etc/redis/redis.conf
            - '--protected-mode'
            - 'no'
          ports:
            - name: redis
              containerPort: 6379
              protocol: TCP
            - name: cluster
              containerPort: 16379
              protocol: TCP
          resources:
            requests:
              cpu: 100m
              memory: 100Mi
          volumeMounts:
            - name: redis-data
              mountPath: /data
            - name: redis-conf
              mountPath: /etc/redis
          imagePullPolicy: IfNotPresent
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 100
              podAffinityTerm:
                labelSelector:
                  matchExpressions:
                    - key: app
                      operator: In
                      values:
                        - redis-cluster
                topologyKey: kubernetes.io/hostname
  volumeClaimTemplates:
    - kind: PersistentVolumeClaim
      apiVersion: v1
      metadata:
        name: redis-data
      spec:
        accessModes:
          - ReadWriteOnce
        resources:
          requests:
            storage: 10Gi
        storageClassName: nfs-client
        volumeMode: Filesystem
      status:
        phase: Pending
  serviceName: redis-cluster-service
  podManagementPolicy: OrderedReady
  updateStrategy:
    type: RollingUpdate
    rollingUpdate:
      partition: 0
  revisionHistoryLimit: 10
---
apiVersion: v1
kind: Service
metadata:
  name: redis-cluster-service
  namespace: mbm
  labels:
    app: redis-cluster
spec:
  ports:
    - name: redis-port
      protocol: TCP
      port: 6379
      targetPort: 6379
  selector:
    app: redis-cluster
  clusterIP: None
  clusterIPs:
    - None
  type: ClusterIP
  sessionAffinity: None
  ipFamilies:
    - IPv4
  ipFamilyPolicy: SingleStack
  internalTrafficPolicy: Cluster
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: redis-single-config
  namespace: mbm
  annotations:
    currentVersion: '1'
    description: ''
    kubesphere.io/creator: daizhiqiang
    originName: redis-single-config
data:
  redis.conf: |-
    #daemonize yes
    pidfile /data/redis.pid
    port 6379
    tcp-backlog 30000
    timeout 0
    tcp-keepalive 10
    loglevel notice
    logfile /data/redis.log
    databases 16
    #save 900 1
    #save 300 10
    #save 60 10000
    stop-writes-on-bgsave-error no
    rdbcompression yes
    rdbchecksum yes
    dbfilename dump.rdb
    dir /data
    slave-serve-stale-data yes
    slave-read-only yes
    repl-diskless-sync no
    repl-diskless-sync-delay 5
    repl-disable-tcp-nodelay no
    slave-priority 100
    requirepass Rdis$123456
    maxclients 30000
    appendonly no
    appendfilename "appendonly.aof"
    appendfsync everysec
    no-appendfsync-on-rewrite no
    auto-aof-rewrite-percentage 100
    auto-aof-rewrite-min-size 64mb
    aof-load-truncated yes
    lua-time-limit 5000
    slowlog-log-slower-than 10000
    slowlog-max-len 128
    latency-monitor-threshold 0
    notify-keyspace-events KEA
    hash-max-ziplist-entries 512
    hash-max-ziplist-value 64
    list-max-ziplist-entries 512
    list-max-ziplist-value 64
    set-max-intset-entries 1000
    zset-max-ziplist-entries 128
    zset-max-ziplist-value 64
    hll-sparse-max-bytes 3000
    activerehashing yes
    client-output-buffer-limit normal 0 0 0
    client-output-buffer-limit slave 256mb 64mb 60
    client-output-buffer-limit pubsub 32mb 8mb 60
    hz 10
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: redis-cluster-config
  namespace: mbm
  annotations:
    kubesphere.io/creator: daizhiqiang
data:
  redis.conf: |
    bind 0.0.0.0
    port 6379
    appendonly yes
    dir /data
    cluster-enabled yes
    cluster-node-timeout 5000
    cluster-announce-bus-port 16379
    cluster-config-file /data/nodes.conf
    requirepass Rdis123456
    masterauth Rdis123456

Deploy the Redis cluster with the redis_cluster.yaml file above:

kubectl apply -f redis_cluster.yaml

Join the six Redis instances into one cluster:

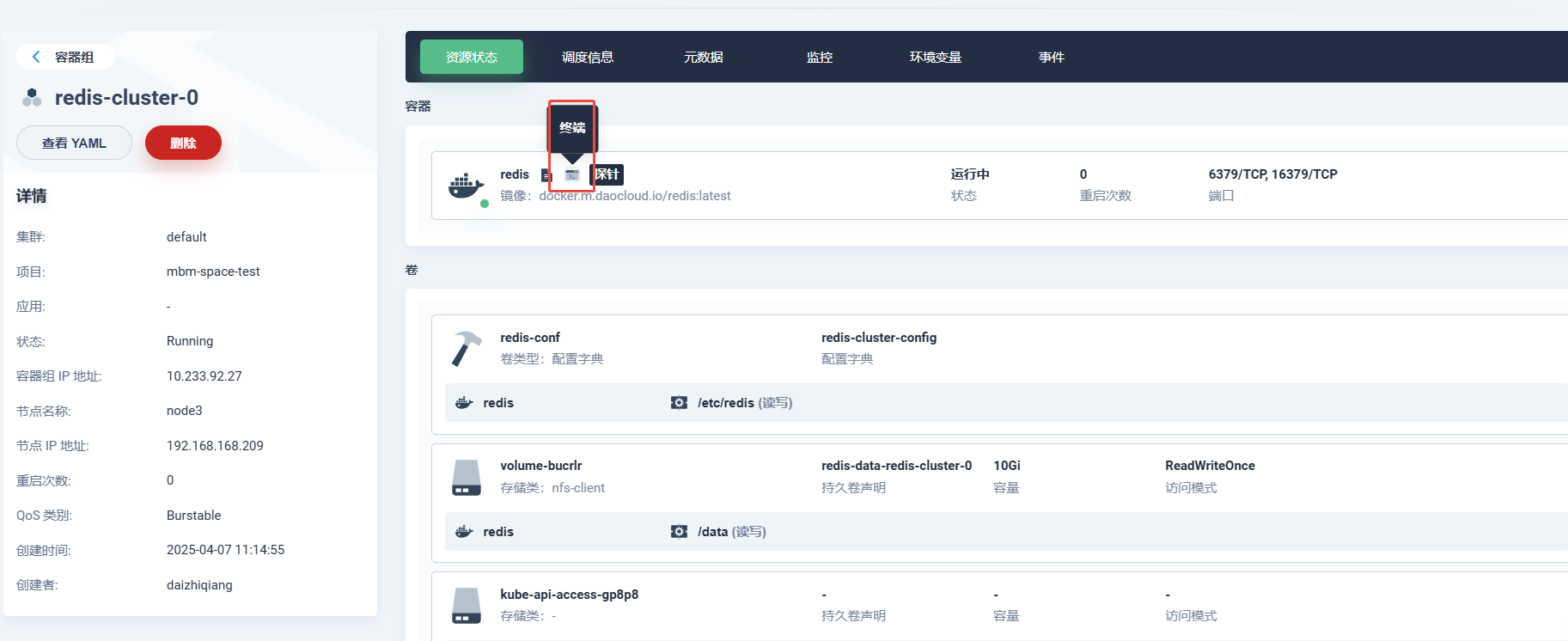

Open a terminal for redis-cluster-0 from KubeSphere:

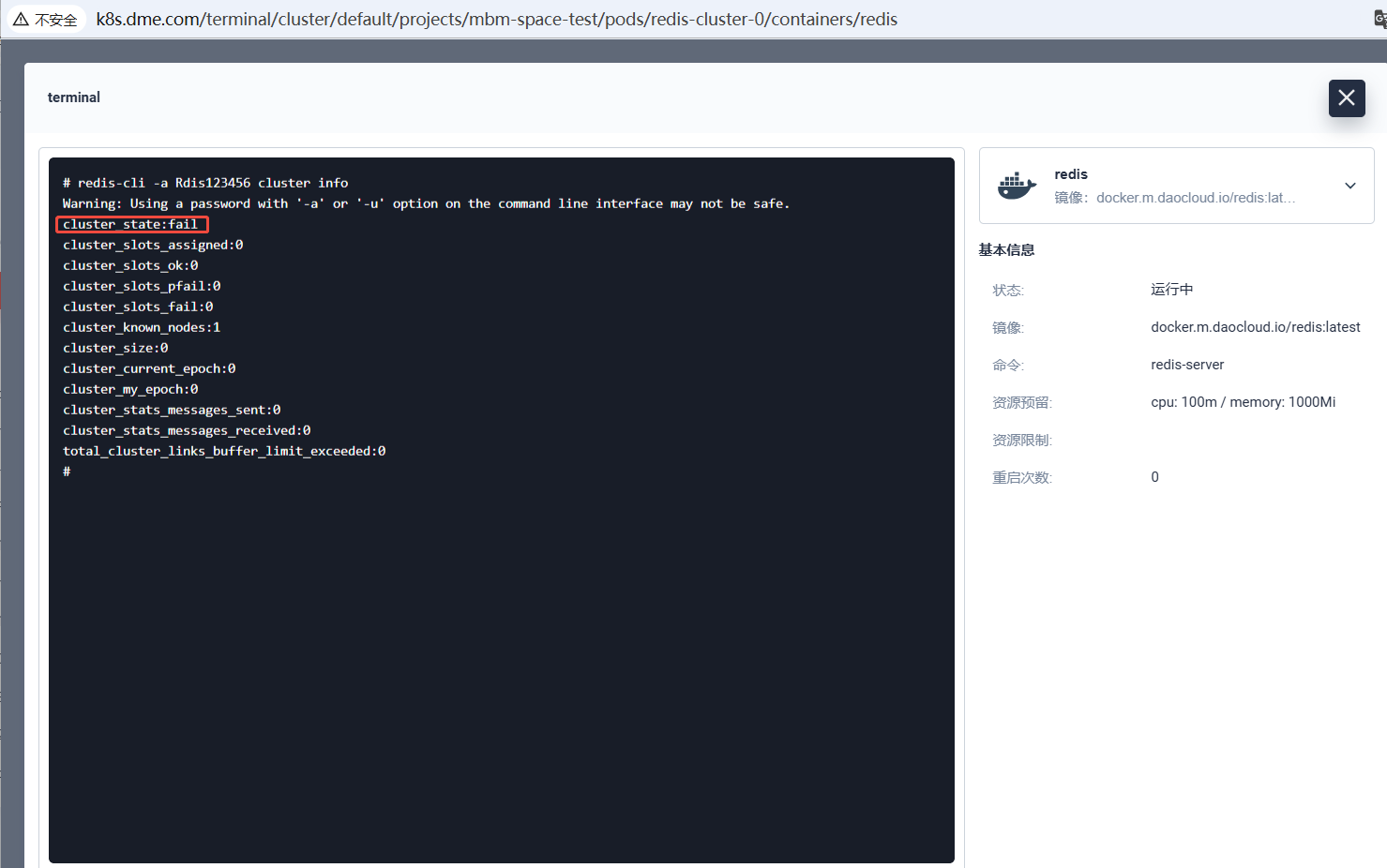

**In the terminal, check the cluster status; the cluster is not ready yet:**

**In the terminal, run the following command to create the cluster:**

redis-cli -a Rdis123456 --cluster create \
  redis-cluster-0.redis-cluster-service.mbm.svc.cluster.local:6379 \
  redis-cluster-1.redis-cluster-service.mbm.svc.cluster.local:6379 \
  redis-cluster-2.redis-cluster-service.mbm.svc.cluster.local:6379 \
  redis-cluster-3.redis-cluster-service.mbm.svc.cluster.local:6379 \
  redis-cluster-4.redis-cluster-service.mbm.svc.cluster.local:6379 \
  redis-cluster-5.redis-cluster-service.mbm.svc.cluster.local:6379 \
  --cluster-replicas 1
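The six node addresses follow the StatefulSet naming pattern `<statefulset>-<ordinal>.<service>.<namespace>.svc.cluster.local`, so they can be generated instead of typed. A sketch with a hypothetical helper, assuming bash is available where you run it.

```shell
#!/usr/bin/env bash
# Sketch: print the stable DNS address of each StatefulSet pod, port 6379.
redis_cluster_nodes() {
  local sts="$1" replicas="$2" ns="$3" svc="$4" i
  for ((i = 0; i < replicas; i++)); do
    printf '%s-%d.%s.%s.svc.cluster.local:6379\n' "$sts" "$i" "$svc" "$ns"
  done
}

# Usage:
#   redis-cli -a Rdis123456 --cluster create \
#     $(redis_cluster_nodes redis-cluster 6 mbm redis-cluster-service) --cluster-replicas 1
```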

Test whether the Redis cluster is usable:

redis-cli -a Rdis123456 -c -h redis-cluster-service.mbm.svc.cluster.local -p 6379
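A scripted version of this check reads `CLUSTER INFO` and looks for `cluster_state:ok`, which Redis reports once every hash slot is served. A sketch with a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: succeed only if CLUSTER INFO output (on stdin) reports cluster_state:ok.
cluster_ok() {
  # CLUSTER INFO lines end in \r\n, so strip carriage returns before matching whole lines.
  tr -d '\r' | grep -qx 'cluster_state:ok'
}

# Usage:
#   redis-cli -a Rdis123456 -c -h redis-cluster-service.mbm.svc.cluster.local -p 6379 cluster info \
#     | cluster_ok && echo "cluster OK"
```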

8.3 Modify the mdm configuration:

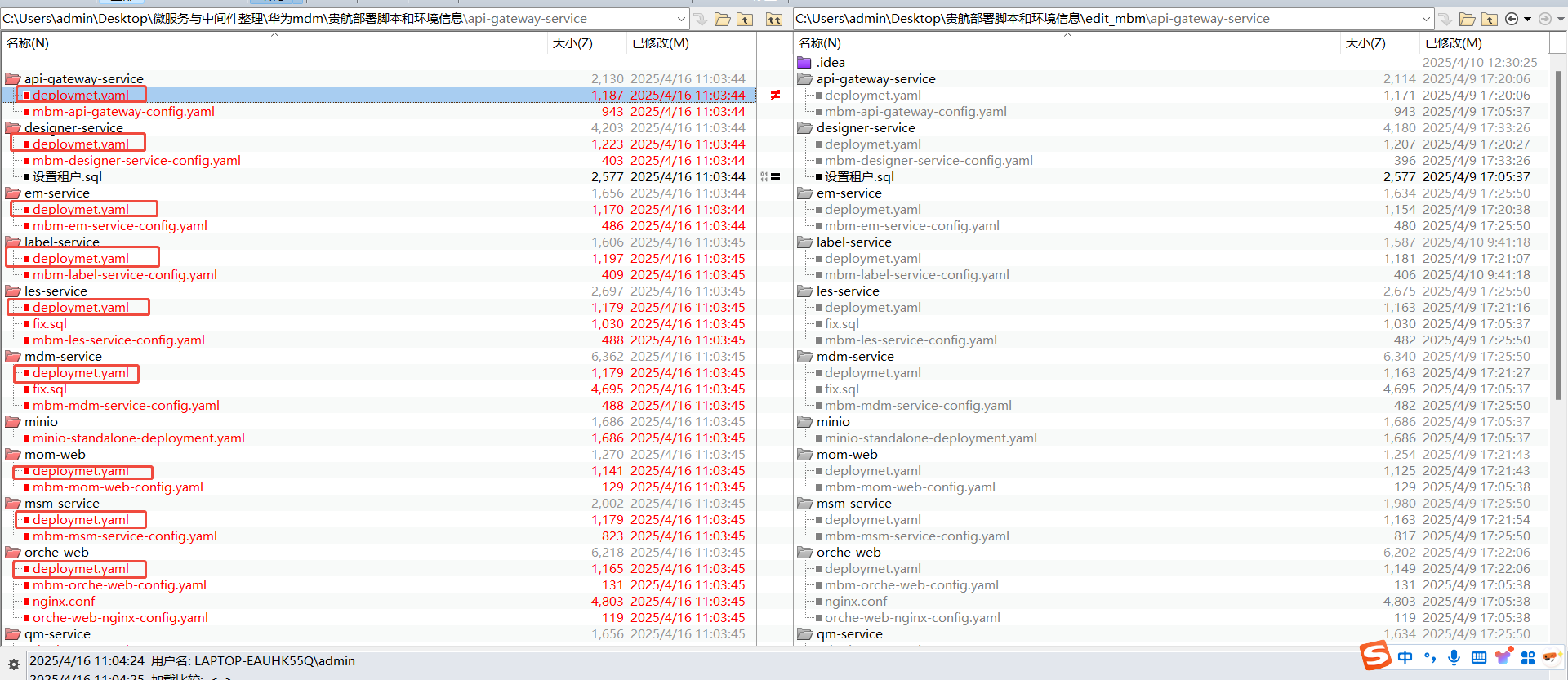

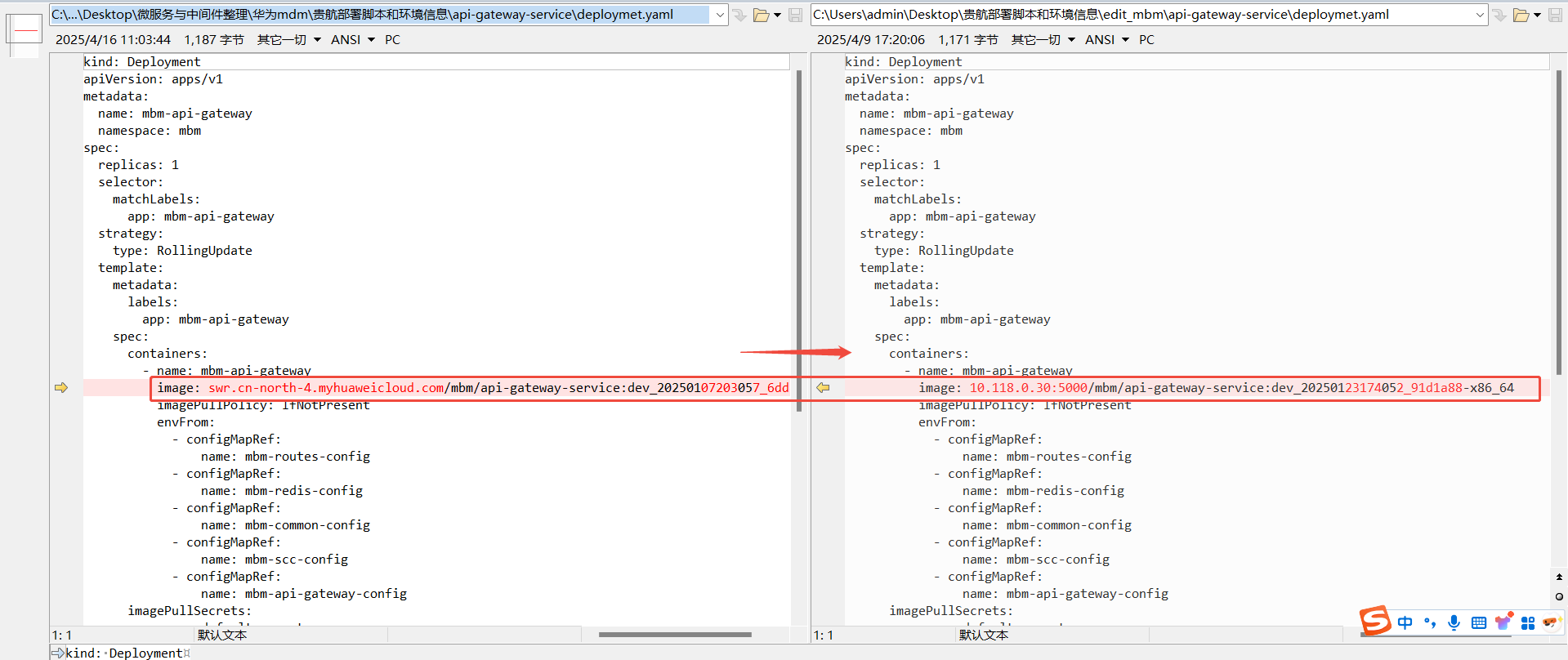

Change the image address in every microservice's Deployment to the address of the imported image:

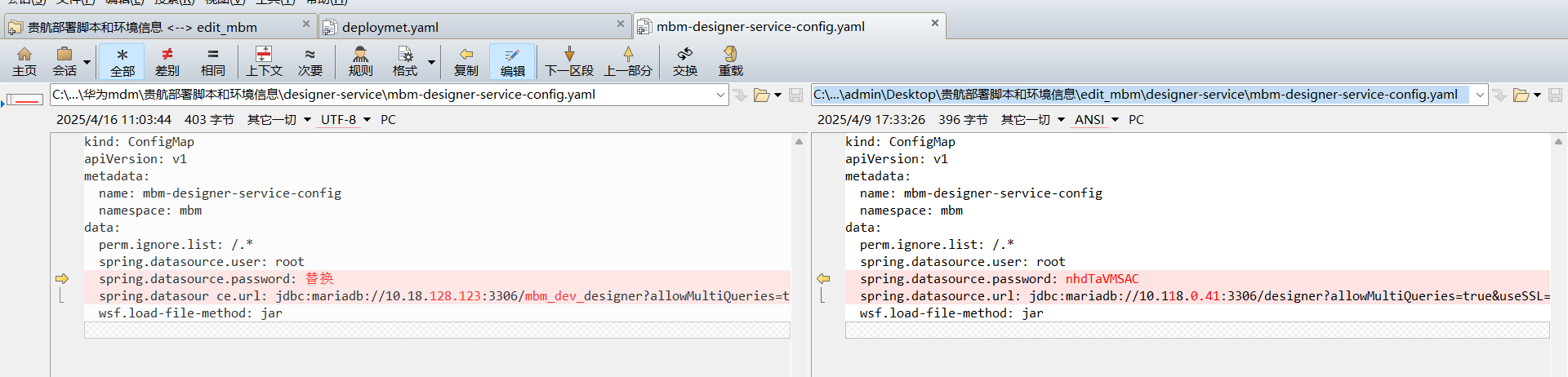

**Search for every jdbc:mariadb setting and change the connection details to those of mysql2:**

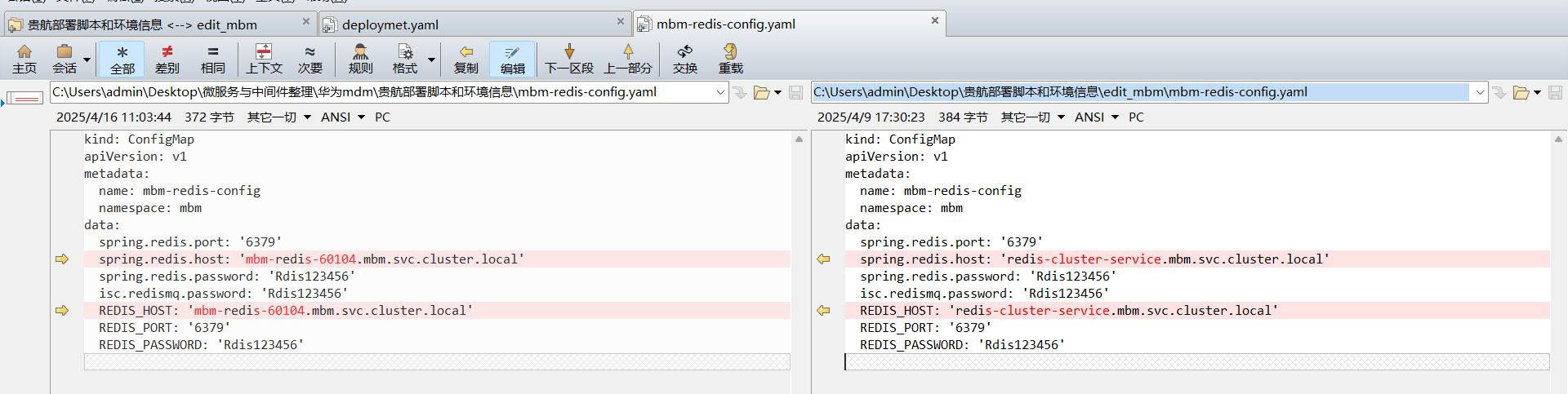

Update the Redis connection details in mbm-redis-config:

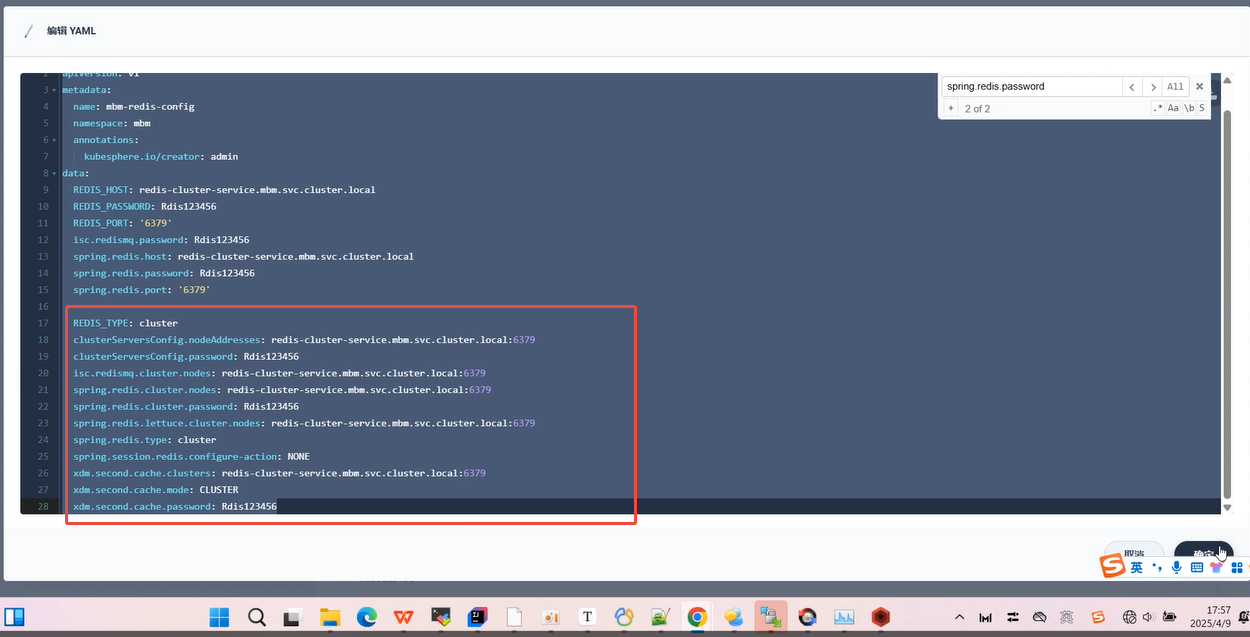

Add the following Redis cluster settings and remove duplicate keys:

REDIS_TYPE: cluster
# Service address: traffic can be forwarded inside the cluster; cluster mode is required, with the members given as domain names (see the notes in the deployment YAML)
clusterServersConfig.nodeAddresses: redis-cluster-service.mbm.svc.cluster.local:6379
clusterServersConfig.password: Rdis123456
isc.redismq.cluster.nodes: redis-cluster-service.mbm.svc.cluster.local:6379
isc.redismq.password: Rdis123456
spring.redis.cluster.nodes: redis-cluster-service.mbm.svc.cluster.local:6379
spring.redis.cluster.password: Rdis123456
spring.redis.lettuce.cluster.nodes: redis-cluster-service.mbm.svc.cluster.local:6379
spring.redis.password: Rdis123456
spring.redis.port: '6379'
spring.redis.type: cluster
spring.session.redis.configure-action: NONE
xdm.second.cache.clusters: redis-cluster-service.mbm.svc.cluster.local:6379
xdm.second.cache.mode: CLUSTER
xdm.second.cache.password: Rdis123456
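If you prefer kubectl to the console, the keys above can be applied as a merge patch. A sketch: the namespace `mbm` is an assumption taken from the manifests above, `configmap_data_patch` is hypothetical, and values are inserted without escaping, so they must not contain double quotes or backslashes. A merge patch overwrites keys with the same name but leaves other old keys in place, so duplicates still have to be removed by hand.

```shell
#!/usr/bin/env bash
# Sketch: turn key=value arguments into a ConfigMap merge patch {"data":{...}}.
# Only the first "=" splits key from value; values are used verbatim.
configmap_data_patch() {
  local pair key val sep="" body=""
  for pair in "$@"; do
    key="${pair%%=*}"
    val="${pair#*=}"
    body+="${sep}\"${key}\":\"${val}\""
    sep=","
  done
  printf '{"data":{%s}}\n' "$body"
}

# Usage:
#   kubectl -n mbm patch configmap mbm-redis-config --type merge \
#     -p "$(configmap_data_patch REDIS_TYPE=cluster spring.redis.type=cluster)"
```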

8.4 Deploy mdm

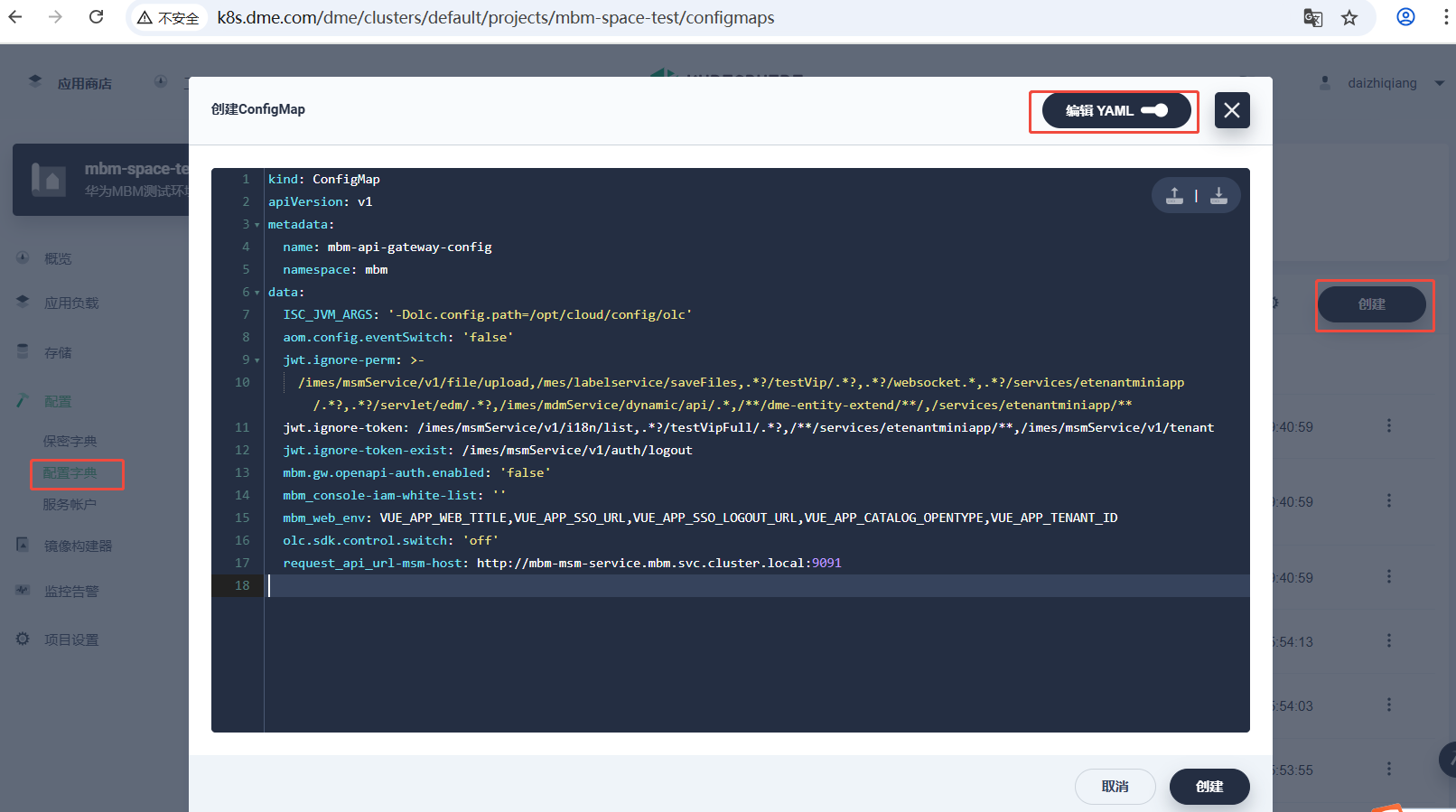

8.4.1 Deploy the mdm ConfigMaps

Using the KubeSphere console, deploy the xxx-config.yaml files in each of the following directories in turn: api-getway-service, designer-service, em-service, label-service, les-service, mdm-service, mom-web, msm-service, orche-web, qm-service, sfc-service and wom-service.

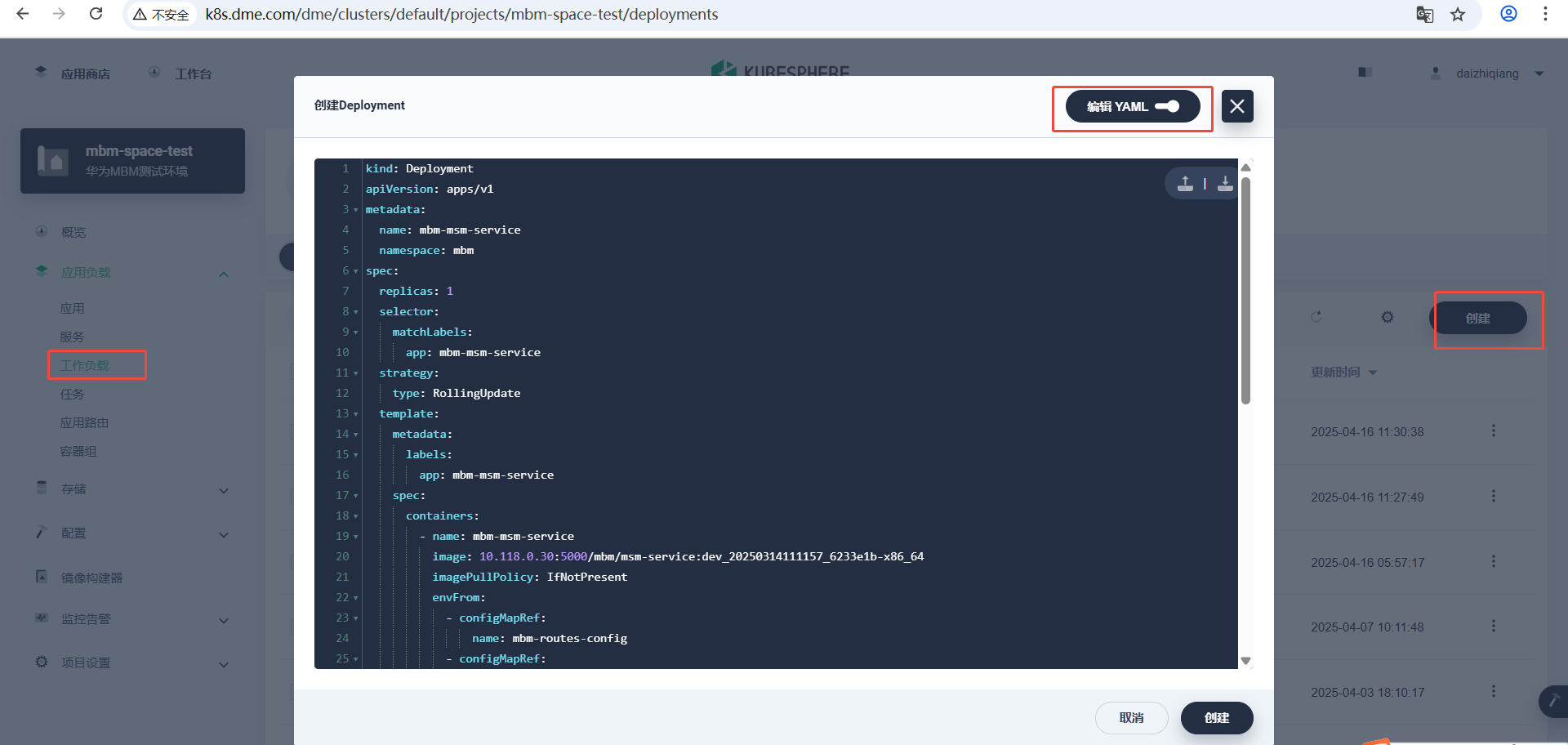

8.4.2 Deploy the mdm Deployments

Using the KubeSphere console, deploy the deployment.yaml file in each of the following directories in turn: api-getway-service, designer-service, em-service, label-service, les-service, mdm-service, mom-web, msm-service, orche-web, qm-service, sfc-service and wom-service.

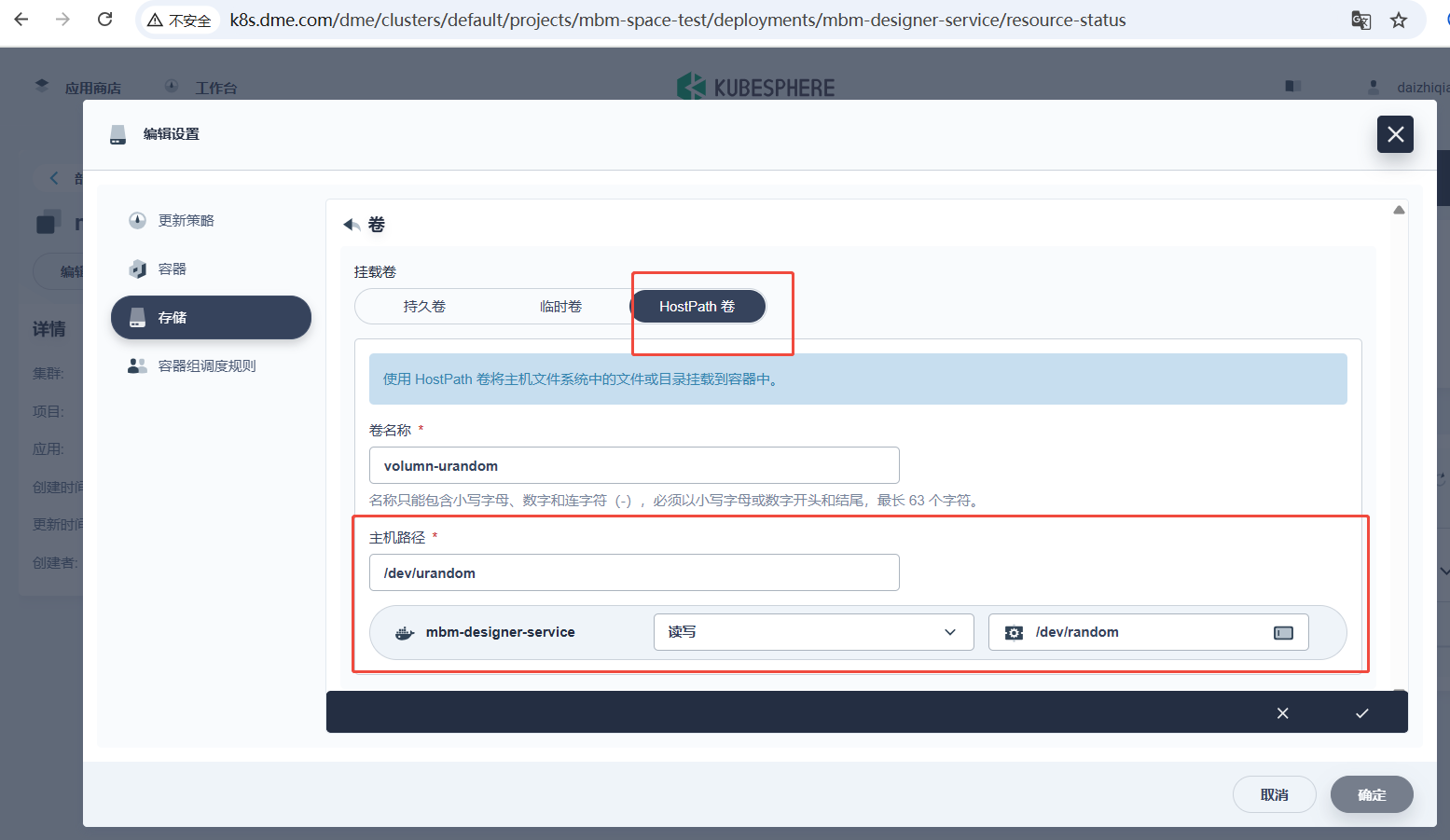
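The two console steps above (ConfigMaps first, then Deployments) can also be done with `kubectl apply`. A sketch, assuming it runs from the folder that contains the service directories, that the ConfigMap files match `*-config.yaml` and that each workload is in `deployment.yaml`; the function name and its namespace argument are assumptions.

```shell
#!/usr/bin/env bash
# Sketch: apply one file pattern in every service directory, in a fixed order.
# DRY_RUN=1 prints the commands instead of running them.
SERVICES=(api-getway-service designer-service em-service label-service les-service
  mdm-service mom-web msm-service orche-web qm-service sfc-service wom-service)

apply_in_services() {
  local ns="$1" pattern="$2" dir f
  for dir in "${SERVICES[@]}"; do
    for f in "$dir"/$pattern; do
      # An unmatched glob stays literal, so skip anything that does not exist.
      [ -e "$f" ] || { echo "skip: no $pattern in $dir" >&2; continue; }
      if [ "${DRY_RUN:-0}" = 1 ]; then
        echo "kubectl -n $ns apply -f $f"
      else
        kubectl -n "$ns" apply -f "$f" || return 1
      fi
    done
  done
}

# Usage: ConfigMaps first, then Deployments (use the namespace the files were written for).
#   apply_in_services mdm '*-config.yaml'
#   apply_in_services mdm deployment.yaml
```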

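Note: for every mbm backend service, mount the host's /dev/urandom device node onto /dev/random in the container. Apply this mount to api-getway-service, designer-service, em-service, label-service, les-service, mdm-service, msm-service, qm-service, sfc-service and wom-service; it avoids services hanging on blocking reads from /dev/random (the random-number deadlock problem).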

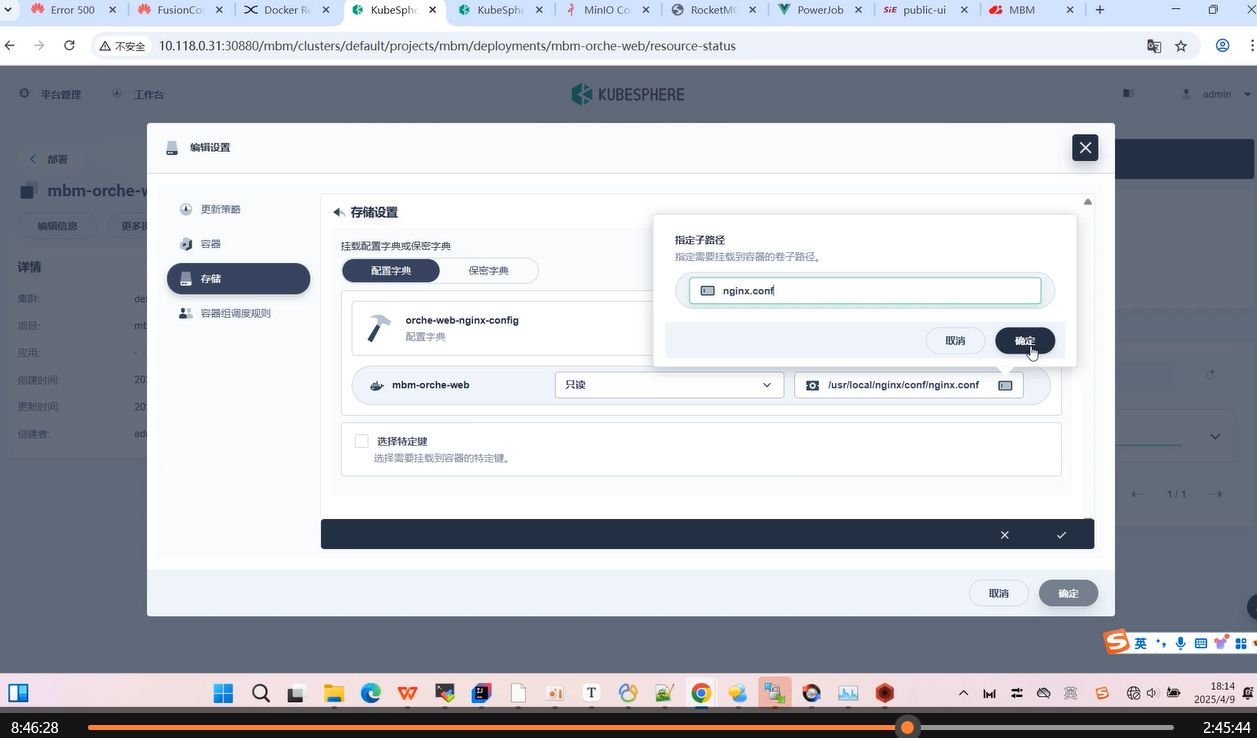
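With kubectl, the mount can be added through a JSON patch. A sketch: it targets the first container, assumes the pod spec already has a `volumes` list and the container a `volumeMounts` list (appending with `/-` fails otherwise), and uses a hypothetical volume name `dev-urandom`; the Deployment name and namespace in the usage line are placeholders.

```shell
#!/usr/bin/env bash
# Sketch: print a JSON patch that mounts the host's /dev/urandom at /dev/random
# in the first container of a Deployment's pod template.
urandom_patch() {
  cat <<'EOF'
[
  {"op": "add", "path": "/spec/template/spec/volumes/-",
   "value": {"name": "dev-urandom", "hostPath": {"path": "/dev/urandom", "type": "CharDevice"}}},
  {"op": "add", "path": "/spec/template/spec/containers/0/volumeMounts/-",
   "value": {"name": "dev-urandom", "mountPath": "/dev/random"}}
]
EOF
}

# Usage (placeholders in angle brackets):
#   kubectl -n <namespace> patch deployment <backend-deployment> --type json -p "$(urandom_patch)"
```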

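Item missed by Huawei: mbm-orche-web does not mount its nginx configuration

For the mbm-orche-web workload, mount the nginx.conf key of the orche-web-nginx-config ConfigMap onto the file /usr/local/nginx/conf/nginx.conf (use subPath nginx.conf so that only this file, not the whole directory, is replaced).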

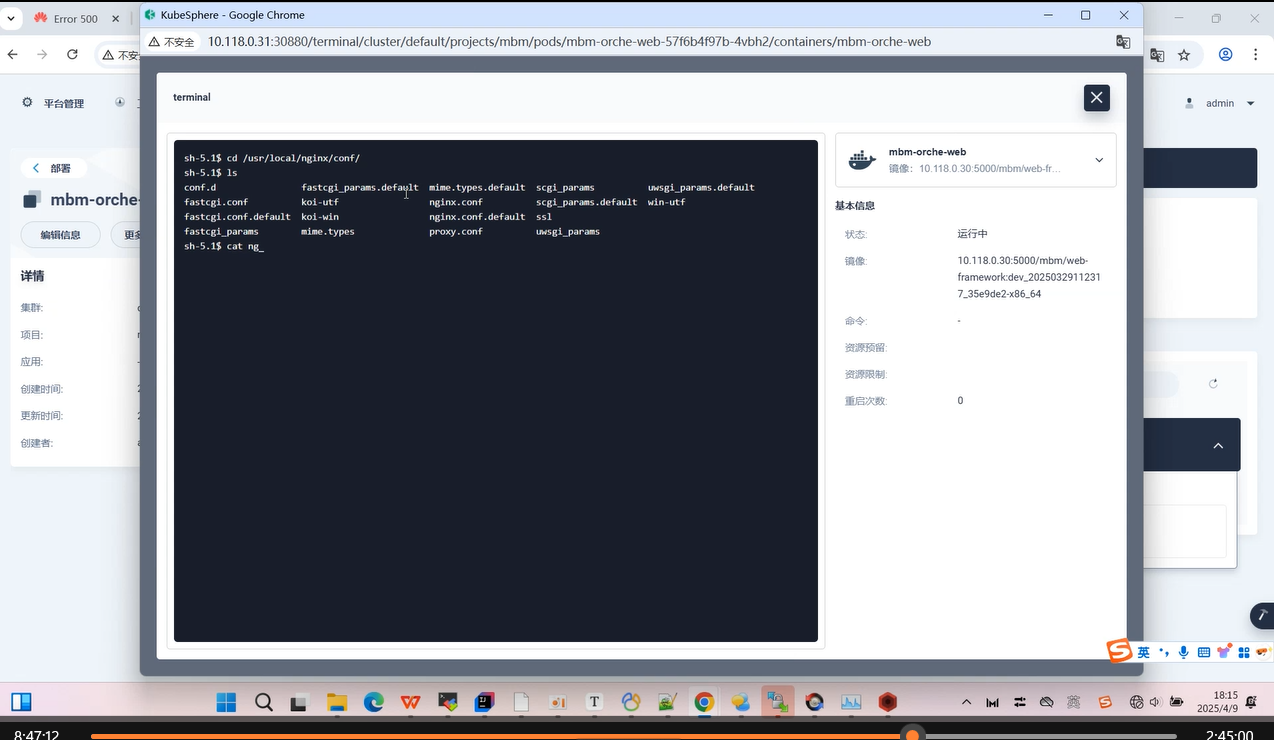

Confirm that the content of /usr/local/nginx/conf/nginx.conf has been replaced correctly.

8.5 Initialize the mdm data

Use Postman to call **POST** http://10.118.0.31:30670/imes/msmService/v1/tenant, where 10.118.0.31 is the master1 IP and 30670 is the NodePort of Huawei MDM's gateway-service. Send the following request body with Content-Type application/json:

{
  "client_id": "",
  "client_secret": "",
  "created_by": "admin",
  "created_by_name": "admin",
  "created_date": "2025-01-16",
  "domain_id": "067cfa58c8404a908780bda7e934e1b1",
  "effective_time": "2025-01-16",
  "expired_time": "2099-12-30",
  "home_url": "",
  "id": "ghgf600523",
  "is_use": "1",
  "org_id": "067cfa58c8404a908780bda7e934e1b1",
  "org_name": "贵州贵航红阳机械",
  "project_id": "1",
  "site_id": "-1",
  "site_name": "default site",
  "user_num": 35
}
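The same request can be sent with curl from master1 or the deployment machine. A sketch, assuming the JSON body above has been saved as tenant.json; `create_tenant` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: POST the tenant JSON to the MDM gateway, as the Postman call does.
# DRY_RUN=1 prints the curl command instead of sending the request.
create_tenant() {
  local gateway="${1:-10.118.0.31:30670}" body="${2:-tenant.json}"
  local cmd=(curl -fsS -X POST -H 'Content-Type: application/json'
             --data-binary @"$body" "http://${gateway}/imes/msmService/v1/tenant")
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "${cmd[*]}"; else "${cmd[@]}"; fi
}

# Usage:
#   create_tenant 10.118.0.31:30670 tenant.json
```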

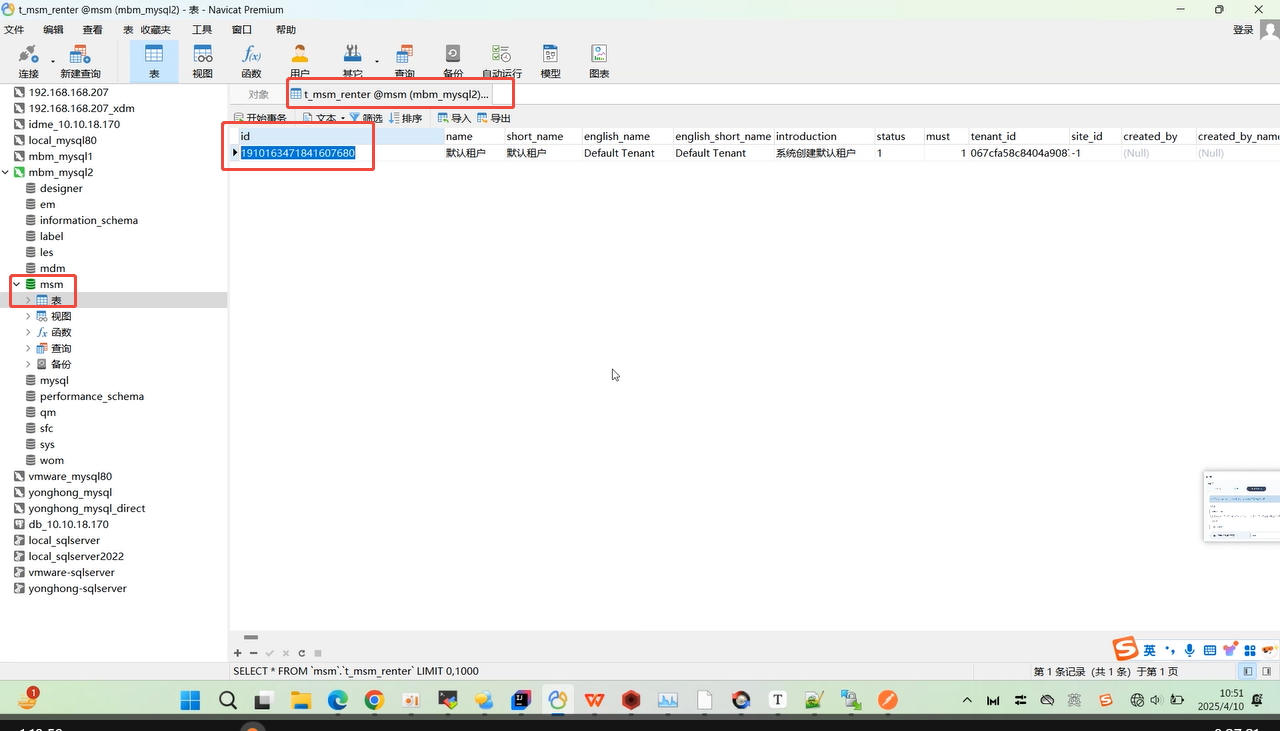

Query the t_msm_renter table in the msm database and note down the id of the record just created:

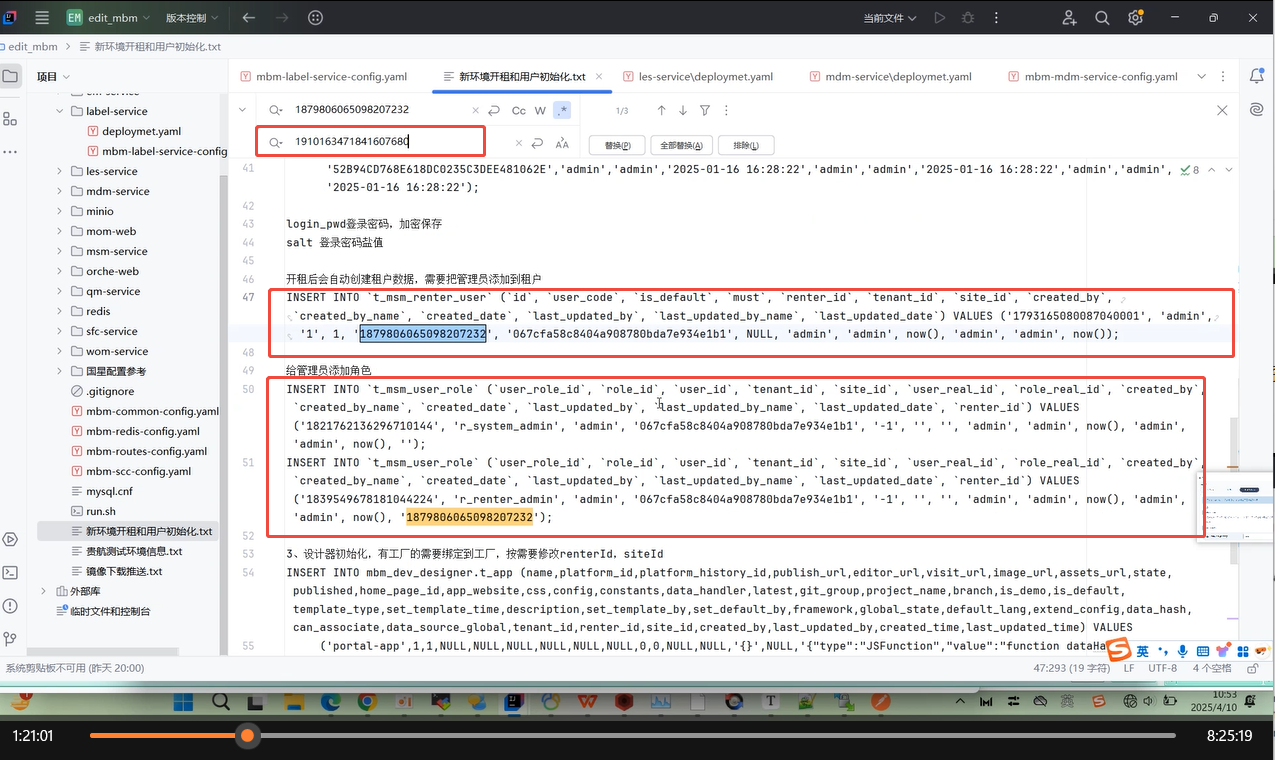

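Open the file "新环境开组和用户初始化" (new-environment group and user initialization), find the existing renderId in the msm statements, replace it with the id from the t_msm_renter table, and insert the following four records into the msm database.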

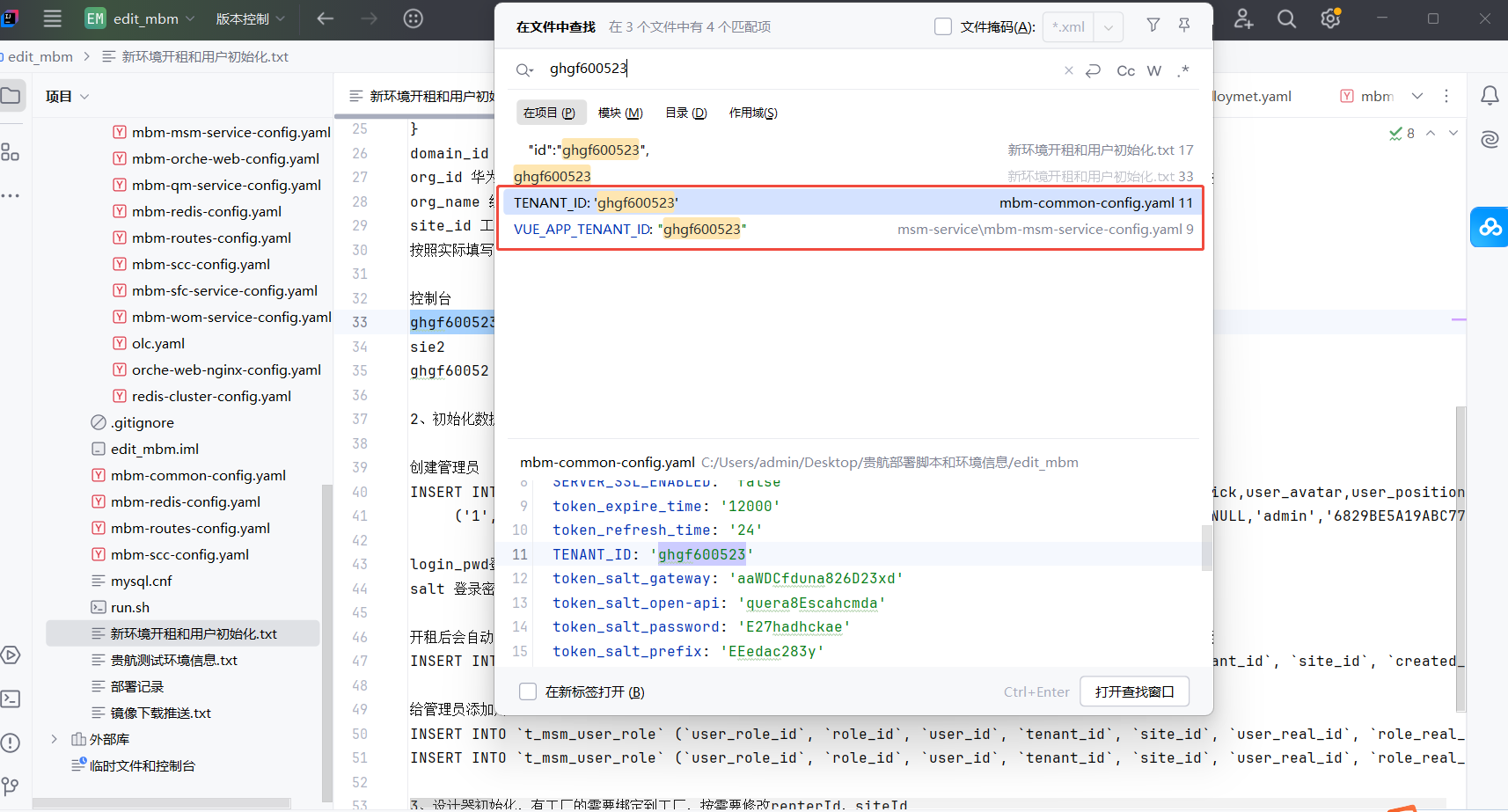

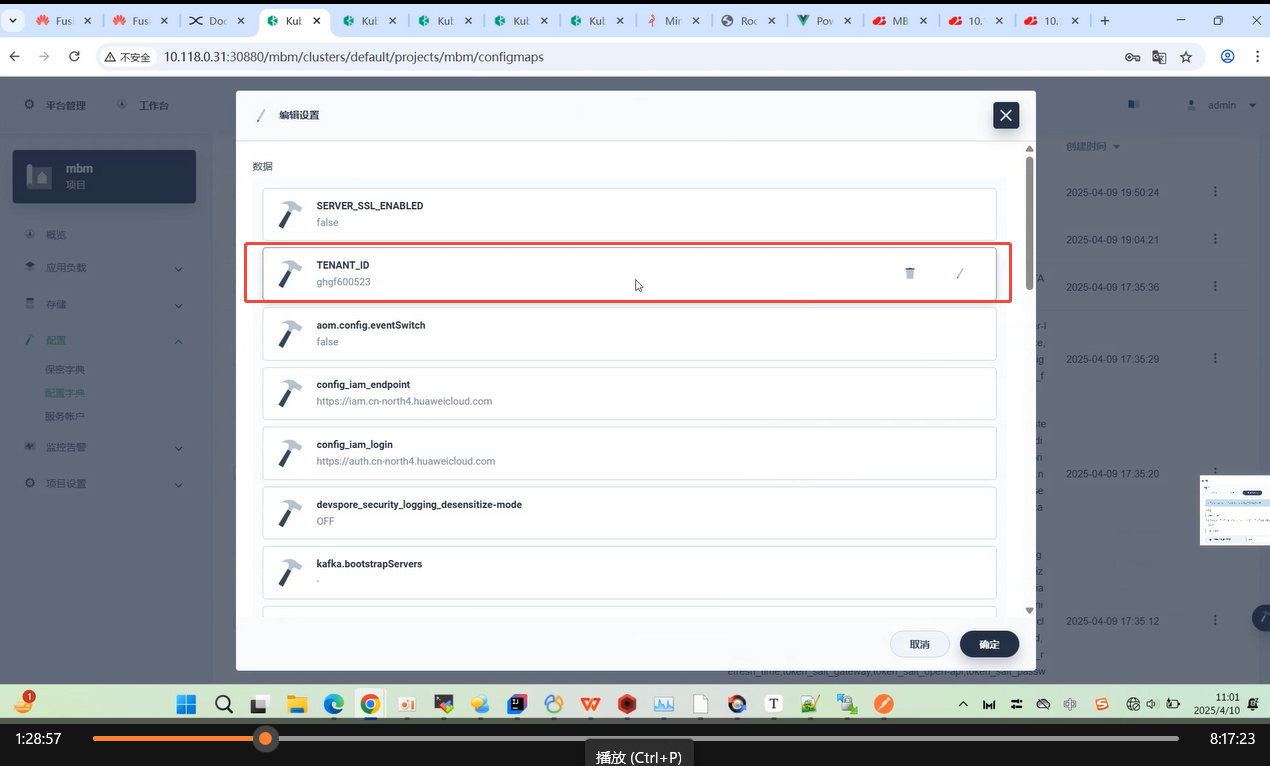

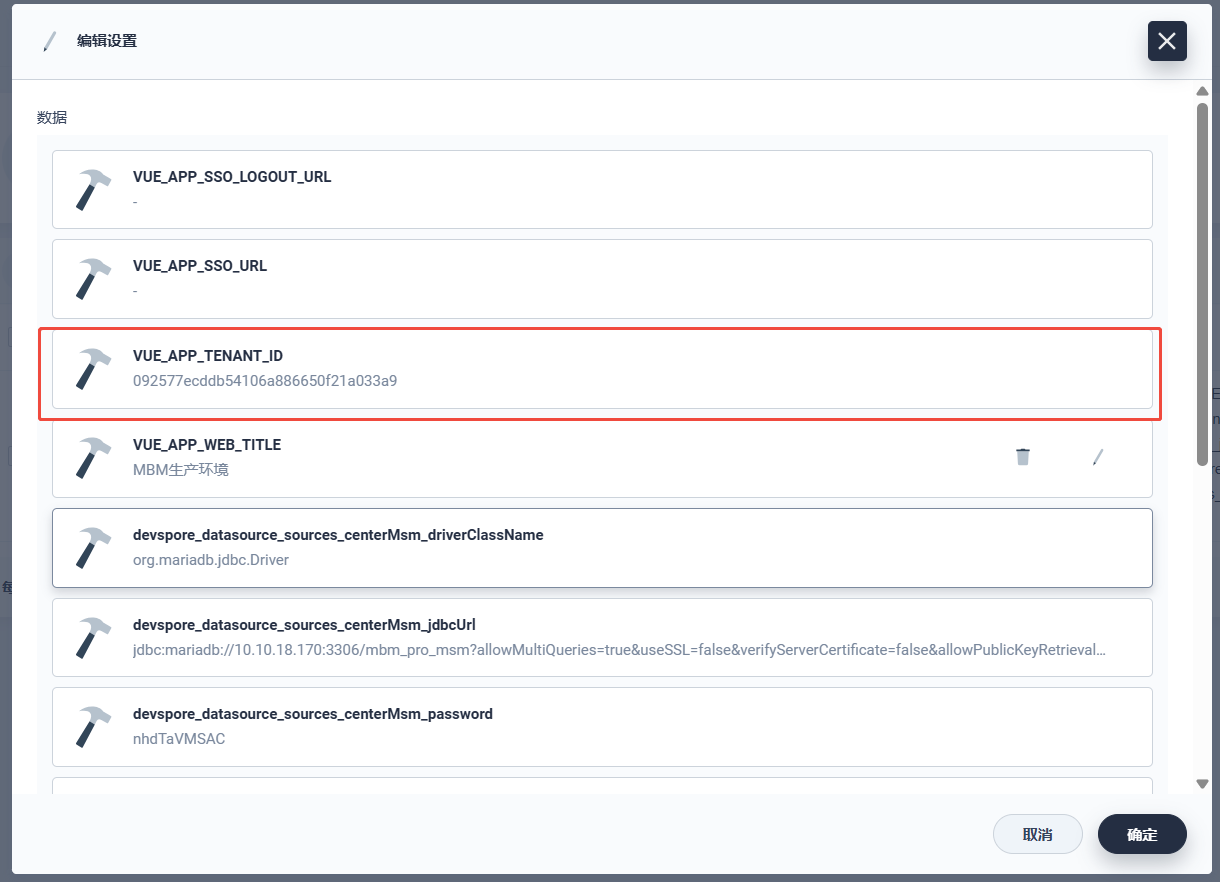

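Search for the keyword "ghgf600523" and, in mbm-common-config.yaml and mbm-msm-service-config.yaml, change TENANT_ID and VUE_APP_TENANT_ID to the value of the tenant_id field in the t_msm_renter table, 067cfa58c8404a908780bda7e934e1b1. Apply the changes to the associated ConfigMaps and restart all microservices.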
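The edit can be scripted with sed. A sketch: it only touches lines containing `TENANT_ID` (which also covers `VUE_APP_TENANT_ID`), keeps a `.bak` copy of each file, and assumes both IDs contain no characters that are special to sed; the function name is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: on TENANT_ID lines only, replace the old tenant id with the new one, in place.
replace_tenant_id() {
  local old="$1" new="$2" f
  shift 2
  for f in "$@"; do
    sed -i.bak "/TENANT_ID/ s/${old}/${new}/g" "$f" || return 1
  done
}

# Usage:
#   replace_tenant_id ghgf600523 067cfa58c8404a908780bda7e934e1b1 \
#     mbm-common-config.yaml mbm-msm-service-config.yaml
#   kubectl apply -f mbm-common-config.yaml -f mbm-msm-service-config.yaml
```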

Log in to the joint-build environment with the administrator account admin/Ghgf@1234.

8.6 Integrate the connection settings of the mdm and imom projects

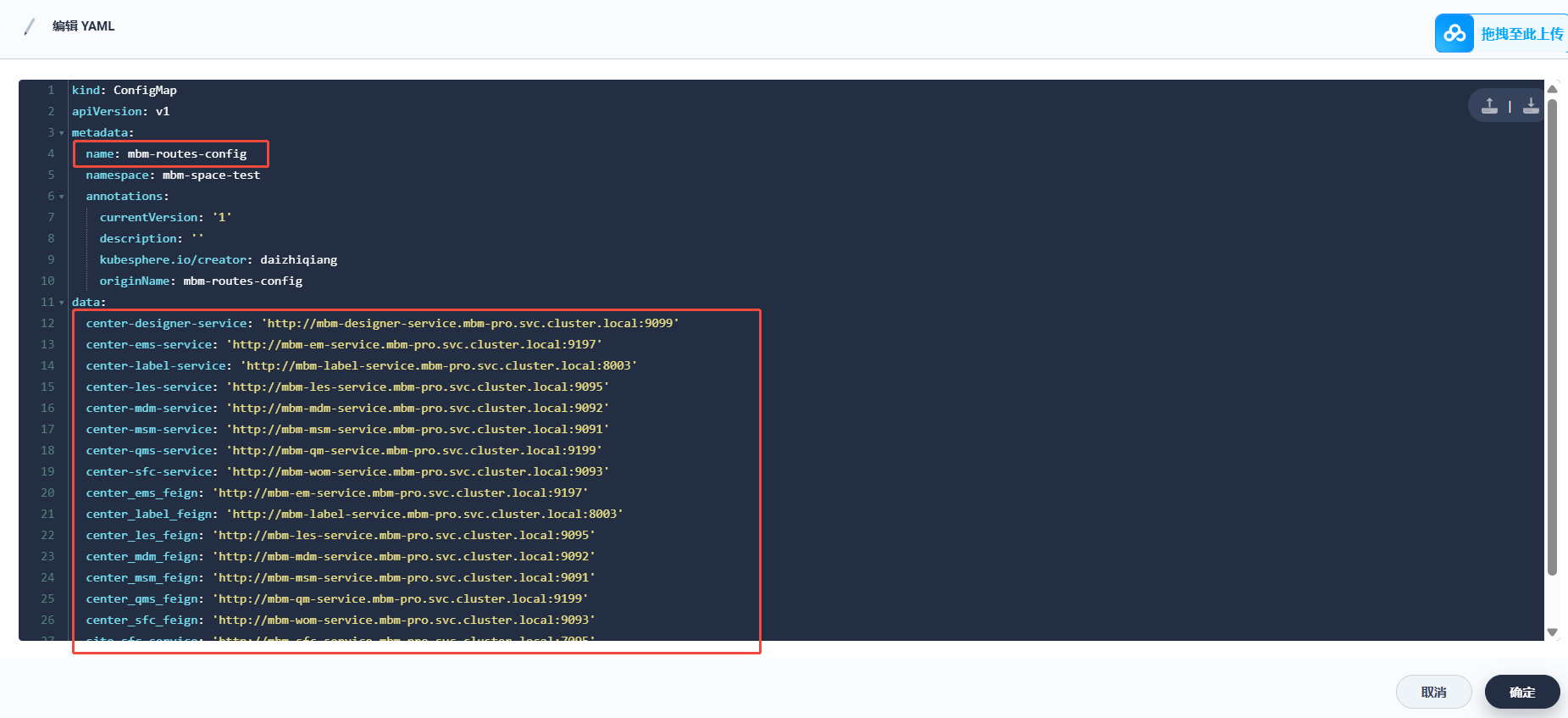

Check mbm-routes-config and verify that the routes from the mdm project to imom are configured correctly: